AI Content Provenance and Digital Watermarking: How C2PA, Content Credentials, and SynthID Are Restoring Trust in Media in 2026

- Internet Pros Team

- April 30, 2026

- Networking & Security

Two years after photorealistic generative AI flooded the open web, the question that defines digital media in 2026 is no longer "Can the model fake it?" (that war is lost) but "Can the viewer verify it?" A coalition of standards bodies, camera makers, AI labs, and platforms has converged on an answer: content provenance and digital watermarking. Backed by C2PA Content Credentials, Google SynthID, Adobe, Microsoft, OpenAI, Meta, and the camera firmware of Leica, Sony, Nikon, and Canon, the new stack cryptographically signs captures at the source, records edits, embeds invisible AI watermarks, and gives platforms a verifiable answer to "Is this real?" In doing so, it restores a thread of trust that the deepfake era nearly severed.

Why Provenance Became the 2026 Trust Layer

By late 2024, generative image and video tools could produce footage indistinguishable from a smartphone clip on first viewing. Election deepfakes, cloned-voice CEO scams, AI-fabricated war imagery, and synthetic celebrity endorsements all spilled into mainstream feeds. The detection arms race, in which classifiers are trained to spot fakes, kept losing ground to better generators. So the industry pivoted: instead of asking "Is this fake?", prove what is real by attaching tamper-evident metadata at the moment of creation, and treat everything unsigned as unverified by default.

That shift was codified by the Coalition for Content Provenance and Authenticity (C2PA), a Joint Development Foundation project led by Adobe, Microsoft, the BBC, Intel, Sony, Nikon, Truepic, OpenAI, Google, and Meta. C2PA 2.1, ratified in 2025 and now an ISO standard (ISO/IEC 22144), defines the Content Credentials manifest: a signed set of assertions, stored in a JUMBF container and signed with COSE, that is bound to an image, video, or audio file. It records the device or model that produced the file, the edits applied, and the chain of signatures linking those steps. By 2026, Content Credentials is the de facto provenance language of the internet.
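To make "signed and bound" concrete, here is a minimal Python sketch of the two ingredients: a hard binding (a hash of the exact asset bytes stored in the claim) and a signature over the claim. It is not the C2PA format. To stay stdlib-only it swaps in HMAC-SHA256 for the public-key signature a real signer uses, which means the verifier here needs the same secret key. `make_manifest`, `verify`, and `SIGNING_KEY` are hypothetical names.

```python
import hashlib
import hmac
import json

# HYPOTHETICAL demo key. Real C2PA signers use an asymmetric private key
# (held in secure hardware on cameras) plus an X.509 certificate; HMAC is a
# stdlib-only stand-in and, unlike a real signature, needs a shared secret.
SIGNING_KEY = b"demo-device-key"


def _sign(claim: dict) -> str:
    payload = json.dumps(claim, sort_keys=True).encode()
    return hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()


def make_manifest(asset: bytes, generator: str) -> dict:
    """Build a simplified claim whose hard binding is the asset's SHA-256."""
    claim = {
        "claim_generator": generator,
        "asset_sha256": hashlib.sha256(asset).hexdigest(),
    }
    return {"claim": claim, "signature": _sign(claim)}


def verify(asset: bytes, manifest: dict) -> bool:
    """True only if the claim is untouched and still matches the asset bytes."""
    if not hmac.compare_digest(_sign(manifest["claim"]), manifest["signature"]):
        return False  # the claim itself was edited after signing
    return manifest["claim"]["asset_sha256"] == hashlib.sha256(asset).hexdigest()


photo = b"example image bytes"
manifest = make_manifest(photo, "ExampleCam firmware 1.0")
print(verify(photo, manifest))            # True
print(verify(photo + b"\x00", manifest))  # False: one extra byte breaks the binding
```

Note that stripping the manifest entirely is not detected by this check. That gap is why the stack pairs manifests with watermarks.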

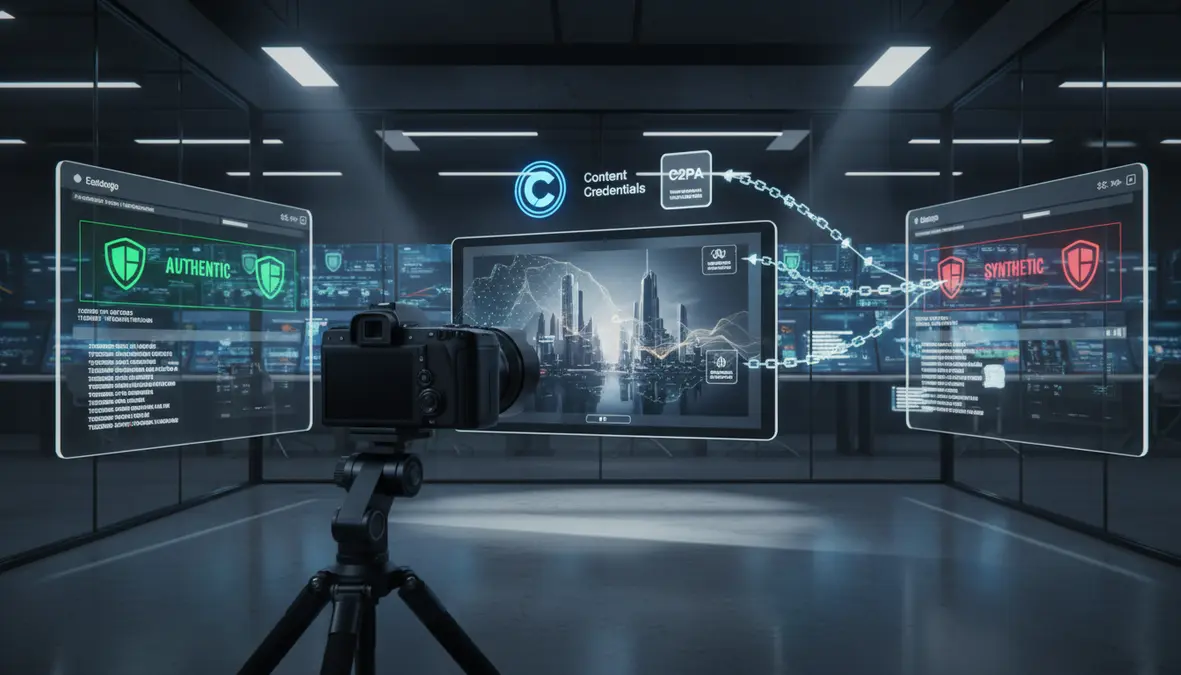

Cryptographic Signing

Every C2PA-enabled capture device or AI service signs the file with a private key, held in secure hardware on cameras. Altering the signed content breaks the hash binding, which any C2PA-aware viewer detects.

Invisible Watermarks

SynthID, AudioSeal, and SynthID-Text embed perceptually invisible patterns in pixels and audio samples, or statistical biases in token choices, designed to survive compression, screenshots, and re-encoding.

Edit-Aware Manifests

Content Credentials records each edit made in a C2PA-aware tool (crop, color grade, generative fill, voice clone), so a viewer can audit the full chain from capture to publication.
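The edit chain that Content Credentials records can be pictured as a hash-linked list: each step stores a digest of the step before it, so rewriting any earlier entry breaks every later link. The Python sketch below is a simplified model built on that assumption, not the C2PA data model. Real manifests link edits through ingredient assertions and sign every claim, and signing is omitted here. `Step`, `record`, and `chain_is_intact` are hypothetical names, and the action labels are modeled on C2PA's actions vocabulary.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from typing import List, Optional


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


@dataclass
class Step:
    action: str                    # action label, e.g. "c2pa.cropped"
    asset_sha256: str              # hash of the asset as it looks after this step
    parent_digest: Optional[str]   # digest of the previous step; None at capture

    def digest(self) -> str:
        return sha256_hex(json.dumps(asdict(self), sort_keys=True).encode())


def record(history: List[Step], action: str, asset: bytes) -> None:
    """Append a step that links back to the digest of the step before it."""
    parent = history[-1].digest() if history else None
    history.append(Step(action, sha256_hex(asset), parent))


def chain_is_intact(history: List[Step], final_asset: bytes) -> bool:
    """Every link must match, and the last step must describe the final file."""
    for prev, cur in zip(history, history[1:]):
        if cur.parent_digest != prev.digest():
            return False
    return bool(history) and history[-1].asset_sha256 == sha256_hex(final_asset)


history: List[Step] = []
raw = b"sensor data"
record(history, "c2pa.created", raw)
cropped = raw[:6]
record(history, "c2pa.cropped", cropped)
graded = cropped.upper()
record(history, "c2pa.color_adjustments", graded)
print(chain_is_intact(history, graded))  # True

history[1].action = "c2pa.placed"        # quietly rewrite the middle step
print(chain_is_intact(history, graded))  # False
```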

The 2026 Provenance Stack

A modern provenance pipeline layers two complementary defenses: active manifests that ride alongside the file, and passive watermarks embedded into the content itself. Each protects against different failure modes.

| Layer | What It Does | Leading Implementations |
|---|---|---|
| Capture Attestation | Hardware-signed proof a photo or video came from a real sensor at a real time and place | Leica M11-P, Sony Alpha 1 II, Nikon Z9, Canon EOS R1, Truepic SDK, Apple ProRAW signing |
| AI Generation Labels | Marks output as machine-made and identifies the producing model | OpenAI Sora 2, DALL·E 3, Midjourney v7, Adobe Firefly, Stability AI, Google Imagen 4 |
| Invisible Watermarking | Embeds detectable patterns into pixels, audio, or tokens that survive normal handling | Google SynthID (image, audio, video, text), Meta AudioSeal, Microsoft Content Integrity |
| Edit Provenance | Records every transform applied to the asset and re-signs the manifest | Adobe Photoshop & Premiere, DaVinci Resolve, Capture One, CapCut Pro, Figma, Canva |
| Verification & Display | Surfaces credentials to readers, journalists, and platforms | Content Credentials icon, contentcredentials.org Verify, BBC Verify, AP, Reuters, TikTok, YouTube, Meta |

Where Provenance Is Already Shipping

Since C2PA 2.1, provenance has moved from press releases into the default behavior of mainstream tools.

News and Photojournalism

The BBC, AP, Reuters, AFP, and The New York Times now publish photos and video with embedded Content Credentials, and follow editorial guidelines that reject any unsigned wire image of a major news event. Leica, Sony, Nikon, and Canon ship cameras with C2PA-signing firmware that writes GPS location and timestamp into a hardware-secured manifest, to which C2PA-aware editing tools later append the edit history. When a frontline image lands on a wire-service desk, an editor can open Content Credentials Verify and see the unbroken chain back to the camera that took it.

Generative AI Output

OpenAI signs every Sora 2 video, DALL·E 3 image, and ChatGPT image generation with a Content Credentials manifest plus an invisible SynthID-style watermark. Google does the same across Imagen 4, Veo 3, and Lyria 2 using SynthID. Adobe Firefly, Microsoft Designer, Stability AI, and Black Forest Labs FLUX have followed. As of 2026, the largest commercial image and video models all label their output by default. The EU AI Act's Article 50 transparency rules make this a baseline requirement for providers serving European users once those obligations apply in August 2026.

Platforms and Distribution

TikTok, YouTube, Meta, LinkedIn, and Pinterest read incoming Content Credentials and SynthID watermarks at upload and surface labels such as "AI-generated" or "Captured by camera" directly in the feed. Reddit and Bluesky have added provenance hooks to moderator tooling. Cloudflare offers a provenance-passthrough mode that preserves manifests through CDN delivery instead of stripping them. That matters because metadata stripping has long been the weak point: credentials survive re-shares only when every intermediary along the way agrees to keep them.
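Platforms do not publish how they turn an incoming manifest into a feed label, but the mapping can be sketched. The Python below assumes the manifest has already been validated and reduces its actions assertion to a plain dict. The `digitalSourceType` URIs come from the IPTC Digital Source Type vocabulary that C2PA actions can reference. `feed_label`, the priority order, and the label strings (taken from the paragraph above, plus a hypothetical "Edited with AI") are illustrative assumptions.

```python
# IPTC Digital Source Type vocabulary, which C2PA actions can reference.
IPTC = "http://cv.iptc.org/newscodes/digitalsourcetype/"

LABELS = {
    IPTC + "trainedAlgorithmicMedia": "AI-generated",
    IPTC + "compositeWithTrainedAlgorithmicMedia": "Edited with AI",
    IPTC + "digitalCapture": "Captured by camera",
}

# Assumed policy: any AI involvement outranks a capture claim.
PRIORITY = ("AI-generated", "Edited with AI", "Captured by camera")


def feed_label(actions_assertion: dict) -> str:
    """Pick one feed label from a simplified, already-verified actions assertion."""
    found = {
        LABELS[a["digitalSourceType"]]
        for a in actions_assertion.get("actions", [])
        if a.get("digitalSourceType") in LABELS
    }
    for label in PRIORITY:
        if label in found:
            return label
    return "No label"


upload = {
    "actions": [
        {"action": "c2pa.created", "digitalSourceType": IPTC + "digitalCapture"},
        {"action": "c2pa.edited",
         "digitalSourceType": IPTC + "compositeWithTrainedAlgorithmicMedia"},
    ]
}
print(feed_label(upload))  # Edited with AI
```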

"You can't fight synthetic media with detection alone — the generators always win the arms race. The durable answer is provenance: prove what is real, label what is made, and give every viewer a way to check. That is what Content Credentials does."

Watermarking Got Real

Manifests can be stripped — by a screenshot, a re-encode, or a malicious actor. So the second line of defense is invisible watermarking that lives inside the content itself.
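A classic spread-spectrum scheme shows the core idea of a watermark that lives inside the content. This is a swapped-in technique, not SynthID: SynthID's image watermark is embedded and detected by trained neural networks, and Google has not fully published the details. In the sketch, a secret key seeds a faint ±1 pattern that is added to the pixels, and detection correlates the image with that pattern. `keyed_pattern`, `embed`, `detect`, and the threshold are hypothetical. Unlike production systems, this toy fails once the image is cropped or resized, because the pattern must stay pixel-aligned.

```python
import random


def keyed_pattern(key: int, n: int) -> list:
    """Pseudorandom +1/-1 pattern that only holders of `key` can regenerate."""
    rng = random.Random(key)
    return [rng.choice((-1, 1)) for _ in range(n)]


def embed(pixels: list, key: int, strength: int = 2) -> list:
    """Add a faint keyed pattern to flattened 8-bit grayscale pixels."""
    pattern = keyed_pattern(key, len(pixels))
    return [min(255, max(0, p + strength * w)) for p, w in zip(pixels, pattern)]


def detect(pixels: list, key: int) -> float:
    """Correlate with the keyed pattern: about `strength` if marked, about 0 if not."""
    pattern = keyed_pattern(key, len(pixels))
    mean = sum(pixels) / len(pixels)
    return sum((p - mean) * w for p, w in zip(pixels, pattern)) / len(pixels)


rng = random.Random(0)
photo = [rng.randint(118, 138) for _ in range(256 * 256)]  # toy 256x256 image
marked = embed(photo, key=42)
# Crude stand-in for lossy re-encoding: add small random noise to every pixel.
noisy = [p + round(rng.gauss(0, 3)) for p in marked]

THRESHOLD = 1.0  # half the embedding strength
print(detect(noisy, key=42) > THRESHOLD)  # True: watermark survives the noise
print(detect(photo, key=42) > THRESHOLD)  # False: unmarked image
print(detect(marked, key=7) > THRESHOLD)  # False: wrong key
```

Because the pattern is spread across every pixel at a small amplitude, no single pixel changes visibly. Summing over tens of thousands of pixels still lifts the signal far above the noise.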

- SynthID for images and video: Google DeepMind's technique modifies image pixels and video frames in patterns invisible to the eye but designed to remain detectable by SynthID Detector after compression, cropping, color grading, and re-encoding.

- SynthID-Audio and AudioSeal: Meta's AudioSeal and Google's SynthID-Audio embed inaudible signals into AI-generated speech and music — critical defenses against voice-cloning fraud and synthetic music laundering.

- SynthID-Text: Token-level statistical watermarking shifts the sampling distribution of an LLM in ways a detector can recognize but a reader cannot, helping educators and platforms flag machine-written text without breaking fluency.

- Capture authenticity proofs: Truepic and Leica use secure-enclave-signed timestamps and sensor-noise fingerprints to bind a photo to a specific physical device — useful for insurance claims, court evidence, and humanitarian documentation.
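Token-level text watermarking can be illustrated with the simpler "green list" scheme from the research literature (Kirchenbauer et al., 2023). SynthID-Text itself uses a different method, tournament sampling, so treat this only as an analogous sketch. A keyed hash marks roughly half of the possible next tokens as green. A watermarking generator prefers green tokens, and the detector computes a z-score for how many green tokens a text contains. The random toy "model" and all names below are hypothetical stand-ins for real logits and real APIs.

```python
import hashlib
import math
import random

GAMMA = 0.5       # expected fraction of candidate tokens that count as "green"
KEY = "demo-key"  # HYPOTHETICAL secret shared by generator and detector


def is_green(prev_token: str, token: str) -> bool:
    """Keyed hash of (previous token, candidate) decides green membership."""
    digest = hashlib.sha256(f"{KEY}|{prev_token}|{token}".encode()).digest()
    return digest[0] < 256 * GAMMA


def z_score(tokens: list) -> float:
    """How far the green count sits above what unwatermarked text would give."""
    t = len(tokens) - 1
    greens = sum(is_green(a, b) for a, b in zip(tokens, tokens[1:]))
    return (greens - GAMMA * t) / math.sqrt(t * GAMMA * (1 - GAMMA))


VOCAB = [f"w{i}" for i in range(1000)]


def toy_generate(n: int, watermark: bool, seed: int) -> list:
    """Random 'model' whose 8 candidates per step stand in for real logits."""
    rng = random.Random(seed)
    out = ["<s>"]
    for _ in range(n):
        candidates = rng.sample(VOCAB, 8)
        if watermark:
            green = [c for c in candidates if is_green(out[-1], c)]
            candidates = green or candidates  # fall back if no candidate is green
        out.append(rng.choice(candidates))
    return out


marked_z = z_score(toy_generate(200, watermark=True, seed=1))
plain_z = z_score(toy_generate(200, watermark=False, seed=2))
# Watermarked text should land far above a cutoff such as 4; plain text near 0.
print(f"watermarked z={marked_z:.1f}, plain z={plain_z:.1f}")
```

A real generator nudges green-token logits by a small bias rather than restricting choices outright. That is how fluency is preserved while the statistical signal accumulates over long passages.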

The Hard Problems Still Open

Provenance is not a finished product. Three problems define the 2026 frontier.

First, screenshot survival. A signed manifest does not survive a screenshot: the captured copy is a clean, unsigned file. Watermarks help, since SynthID is designed to survive screenshots, but no system survives perfect re-photography of a high-resolution display. The fix is partial: pair watermarks with platform-side detection that flags unsigned content of news interest for review rather than treating it as authentic.
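The intake rule of flagging unsigned content rather than trusting it can be written as a small decision function. This Python sketch is a hypothetical policy, not any platform's actual logic. The `Signals` fields assume that manifest validation and watermark detection already ran upstream. `newsworthy` stands in for whatever editorial or ranking signal decides that content is of news interest.

```python
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    SIGNED_PROVENANCE = "signed provenance"
    LABELED_SYNTHETIC = "labeled synthetic"
    NEEDS_REVIEW = "needs human review"
    UNVERIFIED = "unverified"


@dataclass
class Signals:
    manifest_valid: bool    # manifest present; signature and hash binding check out
    manifest_says_ai: bool  # manifest declares generative-AI involvement
    watermark_found: bool   # an invisible AI watermark was detected
    newsworthy: bool        # HYPOTHETICAL upstream signal: of news interest?


def triage(s: Signals) -> Verdict:
    """Hypothetical intake policy: unsigned content is never treated as authentic."""
    if s.watermark_found or (s.manifest_valid and s.manifest_says_ai):
        return Verdict.LABELED_SYNTHETIC
    if s.manifest_valid:
        return Verdict.SIGNED_PROVENANCE
    # No manifest and no watermark: a screenshot of a real photo, a stripped
    # re-upload, and open-weight model output all look the same at this point.
    return Verdict.NEEDS_REVIEW if s.newsworthy else Verdict.UNVERIFIED


screenshot = Signals(manifest_valid=False, manifest_says_ai=False,
                     watermark_found=False, newsworthy=True)
print(triage(screenshot).value)  # needs human review
```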

Second, open-source generators. Closed providers like OpenAI and Google can guarantee that every output is watermarked. Open-weight models (Llama, FLUX, Wan, HunyuanVideo, Stable Diffusion forks) cannot, since anyone can run the weights with the watermarking step removed. The realistic answer is layered defense: require labels at the platform layer, watermark closed-provider output, and apply heuristic detection to the unsigned remainder.

Third, privacy of provenance. A signed photo can leak the photographer's identity, the exact GPS coordinates, and the device serial. Journalists, activists, and whistleblowers need provenance that proves authenticity without unmasking the source. C2PA 2.1 added redactable assertions and zero-knowledge identity proofs, but adoption is still early. Expect 2026-2027 to bring practical anonymous-but-verified workflows for sensitive reporting.
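A salted hash commitment is one generic way to prove authenticity without unmasking the source. The signer signs only hashes of each field, then later reveals the salt and value for the fields it chooses to disclose. This is not C2PA's redaction mechanism and not a zero-knowledge proof. It is the simpler idea behind selective-disclosure formats such as SD-JWT, shown here as a Python sketch. The function names and field values are hypothetical, and the signature that would cover `public` is omitted.

```python
import hashlib
import secrets


def _digest(salt: str, value: str) -> str:
    return hashlib.sha256(f"{salt}|{value}".encode()).hexdigest()


def commit(fields: dict) -> tuple:
    """Return (public commitments to sign, private openings to keep)."""
    commitments, openings = {}, {}
    for name, value in fields.items():
        salt = secrets.token_hex(16)  # random salt stops brute-forcing a hidden value
        openings[name] = (salt, value)
        commitments[name] = _digest(salt, value)
    return commitments, openings


def check_disclosure(commitments: dict, disclosed: dict) -> bool:
    """Every disclosed (salt, value) pair must match its signed commitment."""
    return all(
        commitments.get(name) == _digest(salt, value)
        for name, (salt, value) in disclosed.items()
    )


# Illustrative values only.
fields = {
    "gps": "12.3456,65.4321",
    "device_serial": "SN-0000",
    "captured_at": "2026-04-30T09:12:00Z",
}
public, private = commit(fields)
shared = {"captured_at": private["captured_at"]}  # GPS and serial stay hidden
print(check_disclosure(public, shared))  # True

forged = {"captured_at": (private["captured_at"][0], "2026-05-01T00:00:00Z")}
print(check_disclosure(public, forged))  # False
```

An empty disclosure passes trivially, so a verifier would also check that the fields it needs are actually present.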

What Builders, Newsrooms, and Brands Should Do Now

The economics of provenance flipped in 2025: not signing your content is now the costly choice, because unsigned media is increasingly treated as suspect by platforms, advertisers, and AI search engines.

A Practical Provenance Playbook for 2026

- Sign at capture. Adopt C2PA-enabled cameras and capture SDKs (Truepic, Project Origin) for any photo or video that may be used in news, advertising, or evidence.

- Preserve through editing. Use Adobe Photoshop, Premiere, DaVinci Resolve, or Capture One in Content Credentials mode so edit history is signed rather than stripped.

- Label generative output. If you produce AI imagery, video, or voice, leave watermarking on by default and disclose model usage in the public manifest.

- Verify at intake. Newsrooms, platforms, and ad networks should build Content Credentials Verify into editorial and moderation workflows and treat unsigned high-stakes content as unverified.

- Preserve metadata in pipelines. Audit your CDN, CMS, and social publishing tools to make sure they do not strip C2PA manifests on upload or transcode.
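A quick pipeline audit is to push a signed test image through your CDN or CMS and check whether the manifest survived. In JPEG files, C2PA embeds the manifest store as JUMBF boxes inside APP11 marker segments. The heuristic sketch below therefore walks the JPEG segments and reports whether any APP11 payload mentions `c2pa`. It checks presence, not validity. `has_c2pa_segment` is a hypothetical helper, and a real audit should run a full C2PA validator such as the open-source `c2patool` on the delivered file.

```python
import struct


def has_c2pa_segment(jpeg: bytes) -> bool:
    """Heuristic: does any APP11 segment before the image data mention 'c2pa'?"""
    if jpeg[:2] != b"\xff\xd8":
        raise ValueError("not a JPEG: missing SOI marker")
    i = 2
    while i + 4 <= len(jpeg):
        if jpeg[i] != 0xFF:
            return False  # not at a marker; treat the stream as malformed
        marker = jpeg[i + 1]
        if marker == 0xFF:  # fill byte before a marker
            i += 1
            continue
        if marker == 0xDA:  # start of scan: metadata segments are over
            return False
        (length,) = struct.unpack(">H", jpeg[i + 2:i + 4])  # includes these 2 bytes
        payload = jpeg[i + 4:i + 2 + length]
        if marker == 0xEB and b"c2pa" in payload:  # APP11 carries JUMBF boxes
            return True
        i += 2 + length
    return False


def segment(marker: int, payload: bytes) -> bytes:
    """Build one JPEG marker segment (test helper)."""
    return bytes([0xFF, marker]) + struct.pack(">H", len(payload) + 2) + payload


app0 = segment(0xE0, b"JFIF\x00")
app11 = segment(0xEB, b"JP toy jumb box labelled c2pa")  # not a valid JUMBF box
before_cdn = b"\xff\xd8" + app0 + app11 + b"\xff\xda"
after_cdn = b"\xff\xd8" + app0 + b"\xff\xda"  # what a stripping transcoder leaves
print(has_c2pa_segment(before_cdn))  # True
print(has_c2pa_segment(after_cdn))   # False
```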

For most of the internet's history, the default assumption about a photo or recording was that it was real unless proven otherwise. Generative AI inverted that assumption and shook the foundation of journalism, advertising, courts, and elections. The 2026 answer is not nostalgia for the old default — it is a new one: a verifiable provenance layer that runs from capture device to viewer screen, paired with invisible watermarks for the moments when the manifest gets stripped. The companies, newsrooms, and creators that adopt it early will spend the next decade with their credibility intact while the rest fight an unwinnable detection war. Trust is being rebuilt for the AI era, and provenance is the foundation it stands on.