Federated Learning: How Privacy-Preserving AI Is Reshaping Data Collaboration in 2026

- Internet Pros Team

- March 23, 2026

- AI & Technology

In January 2026, a consortium of 14 major hospitals across Europe trained a cancer-detection AI model that outperforms any single institution's diagnostic system — without a single patient record ever leaving its originating hospital. The model, built using NVIDIA FLARE's federated learning framework, achieved 96.3% accuracy on rare tumor classification by learning from 2.4 million medical images distributed across institutions in Germany, France, the Netherlands, and Sweden. No central database was created. No patient data was transferred. No privacy regulation was violated. This is federated learning in action — and in 2026, it is transforming how organizations across healthcare, finance, telecommunications, and government build AI systems that are both powerful and privacy-compliant by design.

What Is Federated Learning?

Federated learning (FL) is a machine learning approach in which a model is trained across multiple decentralized devices or servers that hold local data samples, without exchanging or centralizing that data. Instead of moving data to the model, federated learning moves the model to the data. Each participant trains a copy of the shared model on its own local dataset, then sends only the resulting model updates (gradients or weight changes) to a central aggregation server, which combines them into an improved global model. The raw data never leaves its source, which substantially eases the tension between AI capability and data privacy. It does not remove that tension entirely, because model updates can still leak information about the underlying data; this is why production systems layer additional protections such as differential privacy and secure aggregation on top.

Google pioneered federated learning at scale in 2017 with its Gboard keyboard, training next-word prediction models across millions of Android devices without uploading users' typing data. By 2026, the technology has matured from a research curiosity into an enterprise-grade infrastructure powering mission-critical AI systems across the world's most regulated industries. The core promise remains the same: organizations can collaboratively build AI models that are far more accurate than anything they could train alone — while keeping sensitive data exactly where regulations, policies, and common sense dictate it should stay.

| Aspect | Traditional ML | Federated Learning |
|---|---|---|
| Data Location | Centralized in one server/cloud | Remains on local devices/servers |
| Privacy Risk | High: data must be shared and stored centrally | Lower: only model updates are transmitted, though these can still leak information without added protections |
| Regulatory Compliance | Requires data sharing agreements, anonymization | Supports data minimization by architecture |
| Data Diversity | Limited to what one organization collects | Learns from diverse, distributed datasets |
| Scalability | Bottlenecked by central storage and bandwidth | Scales horizontally across participants |
| Breach Exposure | Central server breach exposes all data | No central data repository to breach |

How Federated Learning Works in Practice

A federated learning training cycle, called a "round," follows a structured sequence. The central server initializes a global model and distributes it to participating nodes (hospitals, bank branches, mobile devices, or edge servers). Each node trains the model on its local data for several epochs, producing updated model weights. These weight updates are encrypted and sent back to the central server, which aggregates them into a new global model, typically using Federated Averaging (FedAvg), which weights each participant's update by its number of training examples, or variants such as FedProx and SCAFFOLD that also change how local training is performed. The improved model is redistributed to participants, and the cycle repeats until the model converges. At no point does raw data move between participants or to the central server.
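To make the round structure concrete, here is a minimal Python sketch of FedAvg on a toy problem. It assumes a hypothetical setup in which every client fits a one-variable linear model y = w*x + b with plain SGD; the function names (`local_train`, `fedavg`, `run_rounds`) and the hyperparameters are illustrative, and encryption, client sampling, and networking are left out. The server step is the sample-count-weighted average that defines FedAvg.

```python
import random

# Hypothetical toy setup: each client fits y = w*x + b on its own private
# (x, y) pairs. Only the weights (w, b) and a sample count leave a client.


def local_train(weights, data, lr=0.05, epochs=5):
    """Run a few epochs of SGD on one client's local data; return new weights."""
    w, b = weights
    for _ in range(epochs):
        for x, y in data:
            err = (w * x + b) - y  # prediction error under squared loss
            w -= lr * err * x      # gradient of 0.5 * err**2 w.r.t. w
            b -= lr * err          # gradient of 0.5 * err**2 w.r.t. b
    return (w, b)


def fedavg(client_results):
    """Average client weights, weighting each client by its sample count."""
    total = sum(n for _, n in client_results)
    w = sum(wts[0] * n for wts, n in client_results) / total
    b = sum(wts[1] * n for wts, n in client_results) / total
    return (w, b)


def run_rounds(clients, rounds=50):
    """Simulate FedAvg rounds; `clients` is a list of local datasets."""
    global_weights = (0.0, 0.0)  # server initializes the global model
    for _ in range(rounds):
        results = []
        for data in clients:  # broadcast, then train locally on each client
            results.append((local_train(global_weights, data), len(data)))
        global_weights = fedavg(results)  # aggregate into the next global model
    return global_weights


if __name__ == "__main__":
    rng = random.Random(0)
    # Three clients; all data follows y = 2x + 1, but each covers a different x range.
    ranges = [(0.0, 1.0, 30), (1.0, 2.0, 50), (-1.0, 0.0, 20)]
    clients = [
        [(x, 2 * x + 1) for x in (rng.uniform(lo, hi) for _ in range(n))]
        for lo, hi, n in ranges
    ]
    print(run_rounds(clients))  # should approach (2.0, 1.0)
```

No client ever shares its (x, y) pairs, yet the averaged model moves toward w = 2, b = 1 because every client's data is consistent with that line. When clients' data pull toward different optima, plain averaging converges more slowly, which is the non-IID problem covered later in this article.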

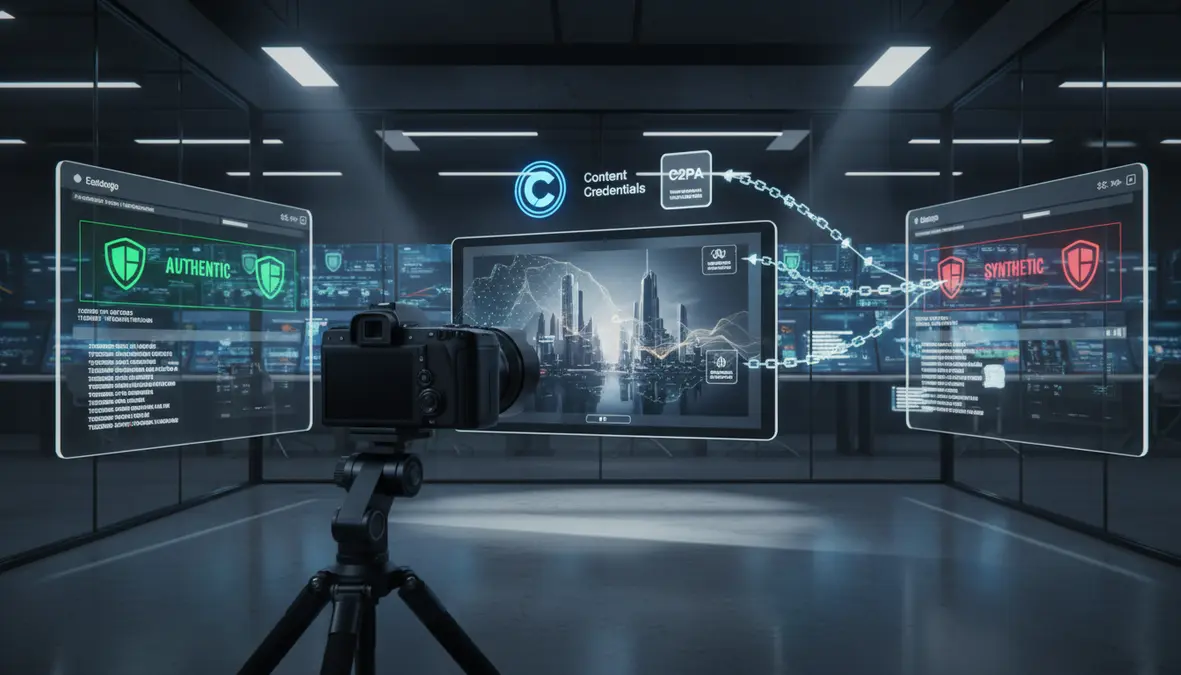

Differential Privacy

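Differential privacy adds calibrated random noise to model updates, typically after clipping each update to a bounded norm, before they leave each participant. This yields a formal, tunable guarantee, governed by a privacy budget usually written ε, that the released updates change only slightly whether or not any single data point was used, which bounds how much an attacker can infer about that data point from the aggregated model. Apple uses differential privacy in its on-device learning for Siri, keyboard predictions, and health insights, so that even if model updates were intercepted, they would reveal only a strictly limited amount about any specific user's data.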
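A minimal Python sketch of the clip-and-noise step follows. It uses the Gaussian mechanism familiar from DP-FedAvg-style training, applied here on the client as described above; `clip_norm` and `noise_multiplier` are illustrative values, and turning them into a concrete ε requires a privacy accountant that this sketch does not include.

```python
import math
import random


def clip_update(update, clip_norm):
    """Scale `update` down so its L2 norm is at most `clip_norm`.

    Clipping bounds how much any one participant's data can change the
    update, which is what lets the added noise be calibrated.
    """
    norm = math.sqrt(sum(u * u for u in update))
    scale = min(1.0, clip_norm / norm) if norm > 0 else 1.0
    return [u * scale for u in update]


def privatize_update(update, clip_norm=1.0, noise_multiplier=1.1, rng=None):
    """Clip, then add Gaussian noise with std = noise_multiplier * clip_norm."""
    if rng is None:
        rng = random.Random()
    clipped = clip_update(update, clip_norm)
    sigma = noise_multiplier * clip_norm  # noise scale tied to the clipping bound
    return [u + rng.gauss(0.0, sigma) for u in clipped]


if __name__ == "__main__":
    raw = [0.8, -2.4, 1.5]  # hypothetical local model update
    print(clip_update(raw, 1.0))  # same direction, L2 norm scaled down to 1.0
    print(privatize_update(raw, rng=random.Random(7)))  # clipped, then noised
```

Larger `noise_multiplier` values give stronger privacy at the cost of noisier aggregates; in practice the trade-off is tuned against model accuracy.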

Secure Aggregation

Secure aggregation uses cryptographic protocols to ensure the central server can see only the combined aggregate of all participants' updates, never any individual participant's contribution. In Google's protocol, each pair of participants agrees on random masks that one adds and the other subtracts, so the masks cancel when all updates are summed; secret sharing lets the server still recover the sum if some participants drop out mid-round. As a result, even a compromised aggregation server cannot isolate any single participant's model update from the masked sum.
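The masking idea can be sketched in a few lines of Python. This is a simplified illustration of pairwise masking, assuming updates have already been quantized to non-negative integers modulo 2^32; `seed_for_pair` is a hypothetical stand-in for the secret each pair of clients would derive through key agreement, and the dropout recovery that the real protocol handles with secret sharing is omitted. It is not a secure implementation: Python's `random` module is not a cryptographic generator.

```python
import random

MOD = 2**32  # updates are assumed to be integer-encoded modulo a fixed ring size


def pairwise_masks(client_ids, dim, seed_for_pair):
    """Simulate every client's mask at once (in reality each client computes its own).

    For each pair i < j, client i adds a shared random vector r_ij and client j
    subtracts it, so the masks of all clients sum to zero modulo MOD.
    """
    masks = {c: [0] * dim for c in client_ids}
    for i in client_ids:
        for j in client_ids:
            if i < j:
                # Hypothetical stand-in for a secret shared only by i and j.
                rng = random.Random(seed_for_pair(i, j))
                r = [rng.randrange(MOD) for _ in range(dim)]
                masks[i] = [(m + x) % MOD for m, x in zip(masks[i], r)]
                masks[j] = [(m - x) % MOD for m, x in zip(masks[j], r)]
    return masks


def mask_update(update, mask):
    """What a client actually sends: its integer-encoded update plus its mask."""
    return [(u + m) % MOD for u, m in zip(update, mask)]


def aggregate(masked_updates):
    """Server sums the masked vectors; the pairwise masks cancel out."""
    dim = len(masked_updates[0])
    return [sum(v[k] for v in masked_updates) % MOD for k in range(dim)]


if __name__ == "__main__":
    updates = {1: [5, 10, 0], 2: [1, 2, 3], 3: [7, 0, 4]}  # hypothetical quantized updates
    masks = pairwise_masks(list(updates), 3, seed_for_pair=lambda i, j: 1000 * i + j)
    sent = [mask_update(updates[c], masks[c]) for c in updates]
    print(aggregate(sent))  # [13, 12, 7]: the true element-wise sum
```

Each vector in `sent` looks like random noise on its own; only the sum over all participants is meaningful to the server.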

Trusted Execution Environments

Hardware-based TEEs (like Intel SGX, ARM TrustZone, and AMD SEV) provide isolated, encrypted enclaves where model aggregation occurs. Even the server operator cannot inspect the computation happening inside the enclave. Microsoft Azure's confidential computing platform now offers FL-as-a-Service with TEE-backed aggregation for enterprise customers.

Industry Applications Driving Adoption

Federated learning has moved decisively from research labs into production deployments across the most data-sensitive sectors of the global economy. In healthcare, the technology is enabling breakthroughs that were previously impossible due to patient privacy regulations. The HealthChain consortium, launched in late 2025, connects 47 hospitals across 12 countries to collaboratively train diagnostic models for cardiovascular disease, diabetic retinopathy, and rare cancers — producing models that see more pathological diversity than any single institution ever could. Roche, Pfizer, and Novartis are using federated learning to train drug-response prediction models across clinical trial sites without pooling patient-level genomic data, accelerating precision medicine while maintaining full HIPAA and GDPR compliance.

In financial services, JPMorgan Chase, HSBC, and Deutsche Bank participate in federated anti-money laundering (AML) networks where each bank trains fraud detection models on its own transaction data. The aggregated model identifies suspicious patterns across institutions — such as layered transactions that span multiple banks — without any bank revealing its customers' financial activity to competitors or regulators beyond what is legally required. Mastercard's federated fraud detection system, deployed in 2026, reduced false positive rates by 40% compared to single-institution models while processing over 150 billion annual transactions.

"Federated learning is not just a privacy tool — it is a competitive advantage. Organizations that master collaborative AI without data sharing will build models that are categorically superior to anything achievable in isolation."

The 2026 Federated Learning Technology Stack

The tooling ecosystem for federated learning has matured dramatically. Open-source frameworks now make it accessible to organizations of all sizes, while cloud providers offer managed services that abstract away the infrastructure complexity.

| Framework | Developer | Best For | Key Feature |
|---|---|---|---|
| NVIDIA FLARE | NVIDIA | Healthcare, enterprise cross-silo FL | Privacy-preserving workflows with TEE support |
| Flower (flwr) | Flower Labs | Research, heterogeneous environments | Framework-agnostic (PyTorch, TensorFlow, JAX) |
| PySyft | OpenMined | Privacy-first FL with differential privacy | Remote data science on data you cannot see |
| Azure FL | Microsoft | Enterprise managed FL-as-a-Service | Confidential computing with TEE aggregation |
| TensorFlow Federated | Google | Cross-device FL at massive scale | Designed for millions of mobile devices |
| IBM FL | IBM | Regulated industries, hybrid cloud | Built-in compliance and audit trails |

Challenges and the Road Ahead

Federated learning is not without challenges. Data heterogeneity, where different participants hold non-identically distributed (non-IID) data, remains the most significant technical hurdle. A hospital specializing in pediatric oncology and one focused on geriatric care will have vastly different data distributions, which can cause the global model to converge slowly or perform unevenly across participants. Algorithms such as FedProx and SCAFFOLD, which limit how far local training drifts from the global model, together with personalized federated learning (where each participant maintains a locally adapted version of the global model), are addressing this challenge with increasing success.
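FedProx's core change is local: each participant minimizes its own loss plus a proximal term (mu / 2) * ||w - w_global||^2, which penalizes drifting far from the current global model. Below is a minimal Python sketch on the same kind of toy linear model, assuming squared loss and plain SGD; `mu`, `lr`, and `epochs` are illustrative values, and the client data is hypothetical.

```python
def fedprox_local_train(global_weights, data, mu=0.1, lr=0.05, epochs=5):
    """Local SGD on squared loss plus the FedProx term (mu / 2) * ||w - w_global||^2."""
    w_g, b_g = global_weights
    w, b = w_g, b_g  # local training starts from the current global model
    for _ in range(epochs):
        for x, y in data:
            err = (w * x + b) - y
            # Local-loss gradient plus the proximal pull mu * (w - w_global).
            grad_w = err * x + mu * (w - w_g)
            grad_b = err + mu * (b - b_g)
            w -= lr * grad_w
            b -= lr * grad_b
    return (w, b)


if __name__ == "__main__":
    # Hypothetical client whose data (y = -3x + 5) disagrees with a global model at (2, 1).
    data = [(x / 20, -3 * (x / 20) + 5) for x in range(20)]
    for mu in (0.0, 1.0, 10.0):
        w, b = fedprox_local_train((2.0, 1.0), data, mu=mu)
        print(f"mu={mu}: w={w:.2f}, b={b:.2f}")
```

Setting `mu=0` recovers ordinary local SGD; larger values keep a client with unusual data closer to the global model, which limits how much its update can drag the aggregate away from the other participants.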

Communication efficiency is another concern. Transmitting large model updates across networks, especially in cross-device scenarios with millions of smartphones, calls for compression techniques such as gradient sparsification and quantization of updates, along with selective participation, where only a subset of devices contributes to each training round. Google has reported that techniques of this kind can cut communication costs by about two orders of magnitude (roughly 100x).
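Both compression ideas are simple to sketch. The Python below keeps only the k largest-magnitude entries of an update (top-k sparsification) and encodes the kept values with a symmetric 8-bit quantizer; the function names and the int8 scheme are illustrative assumptions, and production systems typically add refinements such as error feedback. As a rough worked example of the savings: a dense float32 update of d entries costs 32d bits, while keeping 1% of entries as a 32-bit index plus an 8-bit code costs 40 × 0.01d = 0.4d bits (ignoring the single float scale), about an 80x reduction.

```python
def top_k_sparsify(update, k):
    """Keep only the k largest-magnitude entries, as (index, value) pairs."""
    idx = sorted(range(len(update)), key=lambda i: abs(update[i]), reverse=True)[:k]
    return sorted((i, update[i]) for i in idx)


def quantize_int8(values):
    """Symmetric 8-bit quantization: one float scale plus int codes in [-127, 127]."""
    max_abs = max((abs(v) for v in values), default=0.0)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    codes = [round(v / scale) for v in values]
    return scale, codes


def dequantize_int8(scale, codes):
    """Approximately recover the original values from the scale and codes."""
    return [c * scale for c in codes]


def densify(pairs, dim):
    """Server side: rebuild a dense vector, with zeros for the dropped entries."""
    out = [0.0] * dim
    for i, v in pairs:
        out[i] = v
    return out


if __name__ == "__main__":
    update = [0.02, -1.3, 0.004, 0.9, -0.05, 0.6]  # hypothetical dense update
    pairs = top_k_sparsify(update, k=2)  # [(1, -1.3), (3, 0.9)]
    scale, codes = quantize_int8([v for _, v in pairs])
    restored = densify(zip([i for i, _ in pairs], dequantize_int8(scale, codes)), len(update))
    print(codes, restored)
```

The quantization error per kept entry is at most half the scale, so the lossy part of the scheme is bounded and predictable.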

On the regulatory front, the EU AI Act, most of whose obligations apply from August 2026, recognizes techniques that bring algorithms to the data and train AI systems without transferring or copying the raw data, which is precisely what federated learning does, as measures that support GDPR's data minimization and data-protection-by-design principles. The U.S. National Institute of Standards and Technology (NIST) published its Federated Learning Guidelines in early 2026, providing a standardized framework for evaluating the privacy guarantees of FL deployments and giving enterprises the regulatory clarity needed to adopt the technology at scale.

What This Means for Your Business

Federated learning represents a paradigm shift in how organizations think about data and AI. Companies that have been unable to leverage AI because their data is too sensitive, too regulated, or too distributed across organizational boundaries now have a viable path forward. Healthcare providers can build diagnostic AI that learns from the collective experience of thousands of institutions. Financial firms can detect fraud patterns that span their entire industry. Retailers can build recommendation models that incorporate insights from supply chain partners without exposing proprietary sales data. And organizations subject to GDPR, HIPAA, CCPA, or other privacy regulations can deploy AI on an architecture that supports compliance by design, not just by policy.

At Internet Pros, we help businesses evaluate and implement privacy-preserving AI solutions including federated learning architectures, differential privacy systems, and secure multi-party computation frameworks. Whether you need to build a cross-organizational AI collaboration, deploy on-device learning for your mobile application, or ensure your existing AI systems meet evolving privacy regulations, our team has the expertise to guide your strategy and implementation. Contact us today to explore how federated learning can unlock the full potential of your distributed data while keeping privacy and compliance at the forefront.