World Models: How Spatial AI Is Teaching Machines to Simulate Reality in 2026

- Internet Pros Team

- April 24, 2026

- AI & Technology

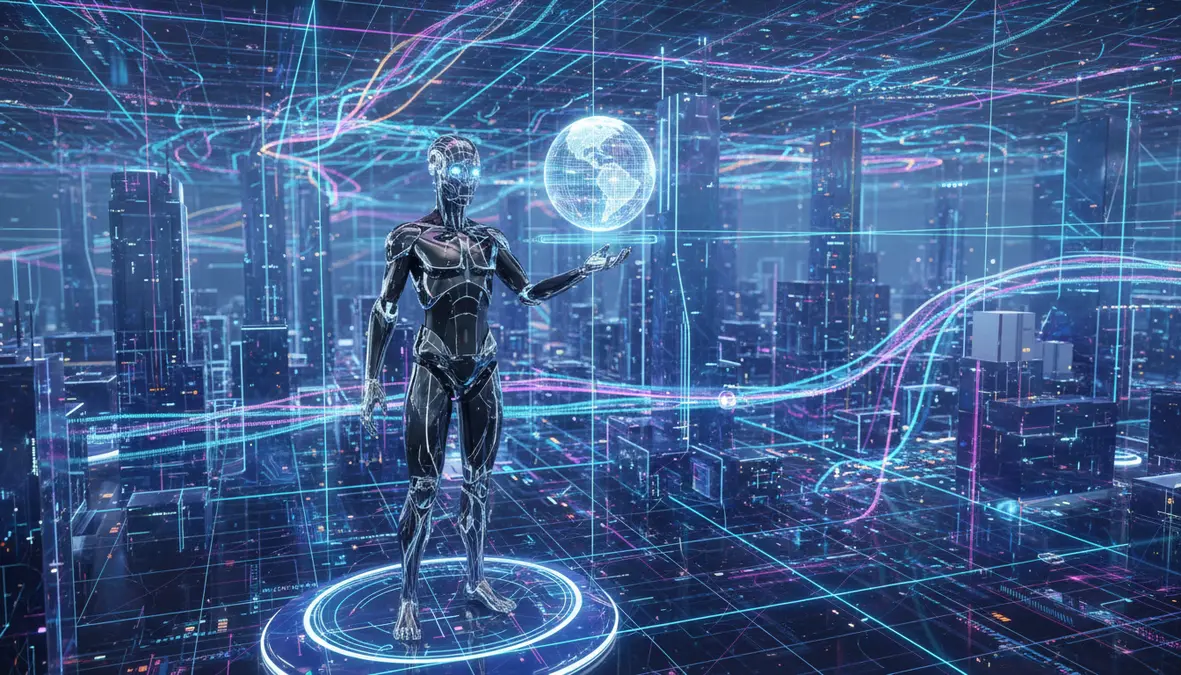

If 2023 was the year AI learned to write and 2024 was the year it learned to see and hear, 2026 is the year AI is learning something much harder: how the world actually works. A new class of models, called world models, is moving beyond language and pixels to learn the physics, geometry, and causal structure of reality itself. From Fei-Fei Li's World Labs to Google DeepMind's Genie 3, NVIDIA Cosmos, OpenAI Sora, Meta V-JEPA 2, and Runway Gen-4, the race is on to build foundation models that can simulate, predict, and reason about a three-dimensional world that persists across time. The winners will unlock everything from believable robots and self-driving cars to generative video that obeys gravity, along with a whole new category of simulated training data that can be generated without deploying a single real-world sensor.

What Is a World Model, Really?

A world model is an AI system that learns an internal, predictive simulation of an environment from observation. Give it a frame of video, a robot's sensor reading, or a text prompt, and it generates what the next moment could plausibly look like: not just pixels, but a latent representation in which objects persist, gravity pulls things down, liquids flow, and an object hidden behind another still exists. Psychologists call that last property object permanence. Roboticists call the predictive step forward dynamics. AI researchers in 2026 call the combination the missing layer between language models and embodied intelligence.

Large language models were trained on text, so they reason in text. World models are trained on video, 3D data, robot trajectories, and simulated physics, so they reason in space and time. The implication is enormous: a world model does not just describe a scene — it can roll it forward, imagine counterfactuals, and answer questions like "what happens if I push this cup?" without ever touching the cup. This is the cognitive substrate that robots, autonomous vehicles, and agentic AI systems have been missing.
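To make the "what happens if I push this cup?" idea concrete, here is a minimal sketch of a counterfactual rollout. Everything in it is an assumption for illustration: `CupState`, `predict_next`, and the friction and mass constants are hypothetical, and the hand-written physics only stands in for what a real world model would learn from data.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class CupState:
    position: float  # metres along the table
    velocity: float  # metres per second

def predict_next(state: CupState, push_force: float, dt: float = 0.1) -> CupState:
    """Toy forward-dynamics step: a hand-written stand-in for a learned model."""
    friction = 2.0  # assumed damping coefficient (1/s)
    mass = 0.3      # assumed cup mass (kg)
    acceleration = push_force / mass - friction * state.velocity
    velocity = state.velocity + acceleration * dt
    position = state.position + velocity * dt
    return CupState(position, velocity)

def rollout(start: CupState, forces: list[float]) -> list[CupState]:
    """Roll the model forward, producing one predicted state per applied force."""
    states = [start]
    for force in forces:
        states.append(predict_next(states[-1], force))
    return states

start = CupState(position=0.0, velocity=0.0)
no_push = rollout(start, [0.0] * 10)            # the factual future: nothing happens
with_push = rollout(start, [1.5] + [0.0] * 9)   # the counterfactual: one brief push

print(f"without push: cup ends at {no_push[-1].position:.3f} m")
print(f"with push:    cup ends at {with_push[-1].position:.3f} m")
```

Both futures are computed from the same starting state without touching a real cup. A production world model would replace `predict_next` with a learned network operating on latent states rather than two hand-picked numbers.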

Spatial Reasoning

World models represent 3D geometry, object permanence, and scene dynamics — not just 2D pixels — enabling reasoning about what is hidden, what is behind you, and what comes next.

Predictive Rollouts

Given a starting state, a world model simulates future dynamics in latent space, over horizons that currently range from seconds to minutes, letting agents plan by imagining outcomes before acting in the real world.

Synthetic Training

Robots and self-driving stacks are now trained largely inside world-model simulations, generating millions of hours of physically plausible data at a fraction of real-world cost.
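The "plan by imagining outcomes" idea behind predictive rollouts can be sketched as a brute-force, receding-horizon planner. This is a toy under stated assumptions: `imagined_step` is a hypothetical one-dimensional stand-in for a learned dynamics model, and the three-action set and horizon of 3 are arbitrary choices, not anything the systems above are known to use.

```python
from itertools import product

ACTIONS = (-1.0, 0.0, 1.0)  # push left, wait, push right

def imagined_step(position: float, action: float) -> float:
    """Hypothetical stand-in for a learned dynamics model (1-D, 0.1 m per action)."""
    return position + 0.1 * action

def plan_first_action(position: float, goal: float, horizon: int = 3) -> float:
    """Imagine every action sequence of length `horizon`; return the best first action."""
    best_cost, best_first = float("inf"), 0.0
    for sequence in product(ACTIONS, repeat=horizon):
        imagined, cost = position, 0.0
        for action in sequence:
            imagined = imagined_step(imagined, action)
            cost += abs(goal - imagined)  # accumulated distance to the goal
        if cost < best_cost:
            best_cost, best_first = cost, sequence[0]
    return best_first

position, goal = 0.0, 1.0
for _ in range(20):
    action = plan_first_action(position, goal)  # plan in imagination
    position = imagined_step(position, action)  # act; here the world matches the model
print(f"final distance to goal: {abs(goal - position):.3f} m")
```

Only the first action of the best imagined sequence is executed before the agent replans, which limits how far model errors can compound. Real systems typically sample or optimize action sequences rather than enumerating them, since enumeration grows exponentially with the horizon.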

The 2026 World Model Landscape

"World model" is an umbrella term, and the teams building them are pursuing very different bets about which modality, architecture, and use case will win. Here is the shortlist of systems that actually matter in 2026.

| Model | Who | Approach | Flagship Use Case |
|---|---|---|---|
| World Labs LWM | Fei-Fei Li / World Labs | Large World Model generating navigable 3D scenes from a single image | Spatial intelligence for robotics and XR |
| Genie 3 | Google DeepMind | Foundation world model; playable 3D environments from text | Agent training, interactive generative media |
| Cosmos WFM | NVIDIA | Physics-aware video-generation foundation model for embodied AI | Humanoid robots, autonomous driving, simulation |
| V-JEPA 2 | Meta FAIR | Joint-embedding predictive architecture; self-supervised latent video | Sample-efficient robot policy learning |
| Sora 2 | OpenAI | Diffusion-transformer video model with emergent physics | Generative video production, storyboarding |
| Gen-4 | Runway | Controllable cinematic world simulation with persistent characters | Film, advertising, creative production |

Why Robotics Is the Killer App

The most economically important consumer of world models in 2026 is not the creative-video market — it is robotics. Every humanoid robot company on Earth (Figure, 1X, Agility, Sanctuary, Apptronik, Unitree, Tesla Optimus, Boston Dynamics) is stuck with the same problem: real-world robot data is expensive, slow to collect, and dangerous to generate at scale. Training a bipedal robot to walk, fold laundry, or stack boxes in the physical world takes thousands of hours of hardware time and eats a lot of replacement parts.

World models change the economics. NVIDIA's Cosmos platform, paired with Isaac Sim and Isaac Lab, lets robotics teams generate photorealistic, physically accurate training video on demand — varying lighting, clutter, object placement, and failure conditions in ways that would take a lifetime to collect in the real lab. Tesla built its own internal world-model simulator to generate edge-case driving scenarios for its Full Self-Driving stack. Waymo, Wayve, and Chinese autonomous-driving leaders Pony.ai and WeRide have all disclosed they now train more miles inside learned world models than they drive on public roads.

"The next generation of AI is not just about generating images or text — it is about building systems that understand space, time, and physical interaction. World models are the foundation for everything embodied."

From Sora to Genie: The Video-to-Simulator Leap

When OpenAI previewed Sora in early 2024, the world saw generative video that was unnervingly plausible but also clearly limited. Objects passed through each other, hands had too many fingers, and causality was optional. Two years later, Sora 2, Google's Veo 3, and Runway's Gen-4 produce minute-long clips in which glass breaks correctly, fluids splash on contact, and characters maintain identity across shots. That is not because their developers hand-coded physics; with enough video, the models learned physics as a side effect of next-frame prediction.

Google DeepMind's Genie 3 pushed further in 2025, turning a text prompt into a navigable 3D environment the user could walk through. Genie 3 is not a video generator but a real-time world simulator: it generates each frame in response to user input and stays consistent across visits to the same spot. That distinction matters because a playable, interactive world model is exactly the training ground that reinforcement-learning agents need to become generalist problem-solvers without burning through real robots.

The Hard Parts: Physics, Persistence, and Long Horizons

Despite the demos, world models in 2026 have real, unsolved problems. Scene consistency over long rollouts is still fragile — objects drift, identities swap, and physics subtly degrades over seconds-to-minutes horizons. Long-tail edge cases (a child running into the street at dusk, a bag blowing under a truck) remain underrepresented in training data, which is the exact failure mode autonomous-driving stacks cannot afford. And sim-to-real transfer — getting a policy trained in a world-model simulator to work on a physical robot — still requires careful domain randomization and real-world fine-tuning.
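Domain randomization, mentioned above as a requirement for sim-to-real transfer, is simple to sketch: each training episode draws its scene parameters from a range, so the policy never overfits to one simulated world. The `SimConfig` fields and every numeric range below are illustrative assumptions, not values from any particular simulator.

```python
import random
from dataclasses import dataclass

@dataclass
class SimConfig:
    light_intensity: float    # relative brightness, 1.0 = nominal
    floor_friction: float     # coefficient of friction
    object_mass_kg: float     # mass of the manipulated object
    camera_jitter_deg: float  # random tilt applied to the camera

def sample_config(rng: random.Random) -> SimConfig:
    """Draw one randomized training scene; all ranges are illustrative assumptions."""
    return SimConfig(
        light_intensity=rng.uniform(0.3, 1.7),
        floor_friction=rng.uniform(0.4, 1.0),
        object_mass_kg=rng.uniform(0.1, 2.0),
        camera_jitter_deg=rng.gauss(0.0, 2.0),
    )

rng = random.Random(42)  # fixed seed so the set of scenes is reproducible
episodes = [sample_config(rng) for _ in range(1000)]

frictions = [cfg.floor_friction for cfg in episodes]
print(f"friction range seen in training: {min(frictions):.2f} to {max(frictions):.2f}")
```

The hope is that the real world ends up looking like just one more sample from these ranges. Choosing ranges that are wide enough to cover reality but narrow enough to stay learnable is where the "careful" part comes in.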

- Persistence: Keeping object identity, scene layout, and story continuity across minutes of generation is an active research frontier.

- Physics fidelity: Learned physics is approximate; safety-critical domains still need symbolic physics solvers in the loop.

- Evaluation: There is no universally accepted benchmark for "how good is your world model" — teams use custom eval suites that are hard to compare.

- Compute cost: Training a frontier world model rivals a frontier LLM in cost; inference for interactive rollouts is even more GPU-hungry.
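The persistence, fidelity, and evaluation problems share one mechanism: small per-step errors compound over a rollout. A minimal sketch of how a team might measure that, assuming a toy falling-ball scene in which the "learned" model has gravity slightly wrong (the function name and all numbers are hypothetical):

```python
def simulate_fall(gravity: float, steps: int, dt: float = 0.05) -> list[float]:
    """Height of a dropped ball at each step (explicit Euler, no floor)."""
    height, velocity = 10.0, 0.0
    heights = []
    for _ in range(steps):
        velocity -= gravity * dt
        height += velocity * dt
        heights.append(height)
    return heights

ground_truth = simulate_fall(gravity=9.81, steps=40)
model_rollout = simulate_fall(gravity=9.60, steps=40)  # slightly wrong "learned" gravity

# Per-step absolute error between the imagined and the true trajectory.
errors = [abs(predicted - true) for predicted, true in zip(model_rollout, ground_truth)]
for horizon in (1, 10, 20, 40):
    print(f"error after {horizon:2d} steps: {errors[horizon - 1]:.4f} m")
```

A roughly 2% error in one constant is nearly invisible after one step and grows quadratically with the horizon. Reporting error as a function of rollout length, rather than as a single number, is one way to make custom eval suites easier to compare.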

What This Means for Business in 2026

For most enterprises, world models will not arrive as a standalone product line — they will arrive embedded inside the next generation of tools you already use. Creative suites (Adobe Firefly, Runway, Canva) will ship persistent-character video. Product-design and CAD tools will let engineers sketch a 3D prototype from a single photo. Warehousing and logistics software will include simulated digital twins of real facilities for training picking robots. Automotive suppliers will ship pre-trained driving policies built inside closed-loop world-model simulators. Even enterprise training software is experimenting with world-model-generated immersive scenarios for safety, compliance, and sales practice.

Key Takeaways for 2026

- World models are the bridge to embodied AI. They provide the spatial, temporal, and causal priors that language models lack.

- Robotics and autonomy are the highest-value use cases. Synthetic training data generated inside world models is already cheaper and safer than real-world data collection.

- Generative video is becoming generative simulation. The leap from Sora to Genie 3 is the leap from "make a clip" to "build a playable world."

- Compute is the moat. Frontier world models rival frontier LLMs in training cost; inference for interactive use is even more GPU-intensive.

- The competitive field is already narrowing. Expect the list of foundation world-model providers to resemble the shortlist of foundation LLM providers by 2027.

For years, AI researchers described language models as "stochastic parrots": fluent, impressive, but ungrounded. World models are the first serious attempt to ground AI in the same physical reality humans occupy. They will not arrive all at once, and the first generation will make plenty of mistakes that would embarrass a toddler. But the direction is unmistakable: the next decade of AI progress will be measured less by how well machines write and more by how well they understand the world they are trying to act inside.