Causal AI: How Reasoning About Cause and Effect Is Unlocking Trustworthy, Decision-Ready Machine Learning in 2026

- Internet Pros Team

- May 6, 2026

- AI & Technology

Modern machine learning is brilliant at one thing, finding patterns in data, and dangerously bad at another: explaining what would happen if you actually changed something. In 2026, that gap has stopped being academic. Hospitals run on pattern-finding models, regulators audit them, and AI agents make decisions on top of them. The discipline closing the gap is Causal AI, a fast-maturing toolset that moves beyond correlation to model the real cause-and-effect structure of the world. It is quickly becoming the missing layer between today's prediction models and tomorrow's decision-grade AI.

Why Correlation Was Never Enough

A retention model can show that customers who open the in-app help center churn 30% less. A perfectly trained classifier can detect that hospital patients who receive aggressive ICU intervention die at higher rates. Both correlations are real, and both are wildly misleading guides to action. The first reflects the type of customer who explores help: one who is already engaged. The second reflects who gets sent to the ICU: the sickest patients. Acting on either correlation as if it were causal leads to disastrous decisions: force every user through the help center, defund the ICU.

This is the central tragedy of supervised learning. Models maximize predictive accuracy under the assumption that the data-generating process never changes. The moment a human, an algorithm, or a policy intervenes, the joint distribution shifts and the model's confident predictions become silently wrong. Causal AI is the formal machinery for handling exactly that shift.
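The ICU example can be reproduced in a few lines. The following is a minimal simulation sketch, not drawn from any real hospital data: the severity rate, admission probabilities, and death risks are all invented for illustration, and the function names are hypothetical. It compares the death-rate gap seen in observational data with the gap obtained when admission is set by intervention.

```python
import random

def simulate_patient(rng, force_icu=None):
    """One patient from a toy model: severity drives both ICU admission and death."""
    severe = rng.random() < 0.3
    if force_icu is None:
        # Observational regime: doctors send mostly severe patients to the ICU.
        icu = rng.random() < (0.9 if severe else 0.1)
    else:
        # Interventional regime: admission is set by policy, ignoring severity.
        icu = force_icu
    # Assumed ground truth: the ICU lowers death risk by 10 points at every severity.
    base_risk = 0.5 if severe else 0.15
    died = rng.random() < (base_risk - 0.1 if icu else base_risk)
    return icu, died

def death_rate(patients, icu_value):
    """Share of patients who died among those with the given ICU status."""
    outcomes = [died for icu, died in patients if icu == icu_value]
    return sum(outcomes) / len(outcomes)

rng = random.Random(0)
n = 100_000
observed = [simulate_patient(rng) for _ in range(n)]
forced_icu = [simulate_patient(rng, force_icu=True) for _ in range(n)]
forced_ward = [simulate_patient(rng, force_icu=False) for _ in range(n)]

# Association: compare ICU and non-ICU patients as they appear in the data.
assoc_gap = death_rate(observed, True) - death_rate(observed, False)
# Intervention: compare a world where everyone is admitted with one where nobody is.
causal_gap = death_rate(forced_icu, True) - death_rate(forced_ward, False)

print(f"observed gap (ICU - ward):    {assoc_gap:+.3f}")
print(f"interventional gap (do(ICU)): {causal_gap:+.3f}")
```

Under these assumed probabilities the observed gap is positive (roughly +0.16 in expectation) while the interventional gap is negative (-0.10 by construction), so a model fitted to the observational data would recommend exactly the wrong policy.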

"You cannot answer a causal question with a statistical model alone. You need assumptions about how the world works — and you need a language to make those assumptions explicit, testable, and falsifiable."

Pearl's Ladder of Causation

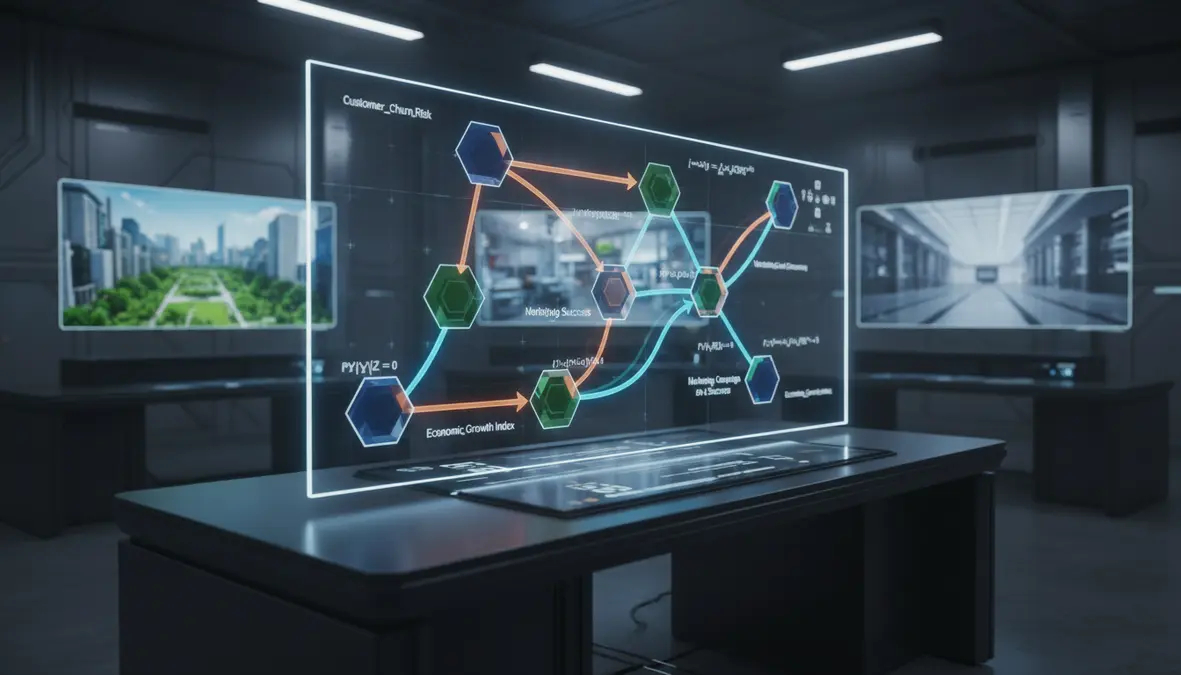

Judea Pearl's framework — now treated as foundational across the field — separates reasoning into three rungs, each strictly more powerful than the last:

Rung 1 — Association

"What is?" — classical statistics and most of deep learning. Answers questions like P(churn | help_visit). This is what every regression and transformer fundamentally does.

Rung 2 — Intervention

"What if I do X?" — answered with the do-operator: P(churn | do(help_visit)). Predicts the effect of an action, not the effect of an observation.

Rung 3 — Counterfactuals

"What would have happened?" — reasoning about an individual, hypothetical world: "Would this patient have survived if treated differently?" The hardest rung, and the one closest to human reasoning.

No amount of observational data alone can lift a model from one rung to the next. The leap requires causal assumptions, encoded as a directed acyclic graph (DAG) of the variables and their dependencies. The DAG cannot be recovered from data alone; it is the scaffolding on top of which the data does meaningful work.
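Rung 3 is the least intuitive of the three, so a worked example may help. The sketch below assumes a deliberately tiny linear structural causal model with made-up coefficients; the class name `LinearSCM` and its fields are hypothetical. It follows the standard three-step recipe for counterfactuals: abduction, action, prediction.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class LinearSCM:
    """Toy SCM: recovery = base + effect * treated + u, where u is an unobserved individual term."""
    base: float
    effect: float

    def outcome(self, treated: int, u: float) -> float:
        return self.base + self.effect * treated + u

    def counterfactual(self, treated_obs: int, outcome_obs: float, treated_cf: int) -> float:
        # Step 1, abduction: infer this individual's noise term from what was observed.
        u = outcome_obs - self.base - self.effect * treated_obs
        # Step 2, action: swap in the hypothetical treatment value.
        # Step 3, prediction: re-run the structural equation with the same u.
        return self.outcome(treated_cf, u)

# Assumed coefficients, chosen only for illustration.
scm = LinearSCM(base=50.0, effect=8.0)

# An untreated patient scored 46. What would they have scored if treated?
y_cf = scm.counterfactual(treated_obs=0, outcome_obs=46.0, treated_cf=1)
print(y_cf)  # 54.0
```

The rung-2 answer for the average patient under do(treated = 1) would be 58 in this model (assuming u averages to zero). The counterfactual for this particular patient is 54, because the abduction step carries their below-average individual term into the hypothetical world.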

The Modern Causal AI Toolkit

A decade ago, doing causal inference outside an econometrics PhD program was painful. In 2026, an open-source ecosystem has collapsed the workflow into a handful of well-maintained libraries.

| Tool | Maintainer | What It Does in 2026 |
|---|---|---|
| DoWhy / PyWhy | Microsoft + community | Reference 4-step framework: Model, Identify, Estimate, Refute. The de facto standard for end-to-end causal analysis in Python. |
| EconML | Microsoft Research (ALICE) | Heterogeneous treatment effects via Double Machine Learning, Causal Forests, Doubly Robust Learners, DR-OrthoForest, DeepIV. |
| CausalML | Uber | Uplift modeling and CATE estimation at hyperscale; battle-tested on Uber pricing, marketing, and Eats incentives. |
| CausalNex | QuantumBlack / McKinsey | Bayesian-network learning with NOTEARS-based causal discovery and what-if scenario simulation for executives. |
| causaLens decisionOS | causaLens | Enterprise platform combining causal discovery, decision agents, and counterfactual explanations in a low-code interface. |
| CausalImpact | Google | Bayesian structural time-series for measuring the effect of interventions when no clean control group exists. |
| cdt / gCastle | Community (cdt) / Huawei Noah's Ark Lab (gCastle) | Modern causal discovery (PC, GES, FCI, LiNGAM, NOTEARS, GraN-DAG) at scale on tabular and time-series data. |

Where Causal AI Is Already Earning Its Keep

The 2026 production landscape is no longer a slide deck. Causal methods are the backbone of decisions that affect millions of dollars and millions of lives:

- Healthcare and pharma. Roche, Novartis, and AstraZeneca use causal inference on real-world evidence (RWE) to estimate treatment effects when an RCT would be unethical or impossibly slow. The ICH E9(R1) estimand framework, adopted by the FDA, is now built into trial design from the first protocol draft.

- Marketing and pricing. Uber, Lyft, Booking.com, and Spotify run thousands of uplift models in parallel to identify which users will respond to a discount only when prompted — the persuadables — and avoid wasting margin on customers who would have converted anyway.

- Reliability engineering. Causal root-cause analysis on observability data — Datadog Bits AI, New Relic Causal AI, Splunk ML, and the open-source Chaos Engineering toolchain — collapses MTTR by isolating the upstream signal that caused the SLO breach instead of the dozens of correlated downstream alarms.

- Credit and fraud. Banks use counterfactual explanations to meet explanation duties under EU AI Act Article 86 and ECOA adverse-action rules, telling a denied applicant what minimal change would have flipped the decision. Feature attributions such as SHAP rank which inputs mattered but do not by themselves answer that question.

- Public policy and economics. The IMF, World Bank, and central banks use synthetic-control methods, regression discontinuity, and difference-in-differences to evaluate stimulus, tax, and minimum-wage policies in near-real-time.
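The uplift idea from the marketing example above can be sketched on simulated data. This is a toy illustration under stated assumptions: the two segments, their conversion probabilities, and the function names are invented, and the discount is assigned at random so that a simple difference in conversion rates is a valid effect estimate. It is not how any of the named companies implement uplift modeling.

```python
import random
from collections import defaultdict

rng = random.Random(1)

# Assumed ground-truth conversion probabilities: (without discount, with discount).
TRUE_RATES = {"lapsed": (0.05, 0.25), "active": (0.40, 0.42)}

def run_experiment(n_users):
    """Randomized experiment: each user gets the discount with probability 0.5."""
    rows = []
    for _ in range(n_users):
        segment = "lapsed" if rng.random() < 0.5 else "active"
        treated = rng.random() < 0.5
        p = TRUE_RATES[segment][1 if treated else 0]
        rows.append((segment, treated, rng.random() < p))
    return rows

def uplift_by_segment(rows):
    """Uplift = conversion rate with discount minus conversion rate without."""
    counts = defaultdict(lambda: [0, 0])  # (segment, treated) -> [conversions, users]
    for segment, treated, converted in rows:
        counts[(segment, treated)][0] += int(converted)
        counts[(segment, treated)][1] += 1

    def rate(key):
        conversions, users = counts[key]
        return conversions / users

    return {seg: rate((seg, True)) - rate((seg, False)) for seg in TRUE_RATES}

uplift = uplift_by_segment(run_experiment(40_000))
for segment, value in sorted(uplift.items(), key=lambda kv: -kv[1]):
    print(f"{segment:>7}: uplift {value:+.3f}")
```

In this setup the active segment converts far more often overall, so a plain response model would target it. The uplift estimate points the other way: the discount moves lapsed users by about 20 points and active users by about 2, which is the distinction between persuadables and customers who would have converted anyway.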

The Causal Discovery Frontier

Until recently, the DAG had to be hand-drawn by a domain expert — a brittle, slow, and politically charged process inside large organizations. The breakthrough of the last three years has been differentiable causal discovery: algorithms like NOTEARS, DAG-GNN, GraN-DAG, and SCORE recast the search for the right graph as a continuous optimization problem solvable with gradient descent. With observational data plus modest expert priors, modern discovery pipelines now propose candidate DAGs that human experts then refine — turning what was a six-month consulting engagement into an afternoon of iteration.
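What makes the continuous formulation possible is a smooth function that is zero exactly when a weighted adjacency matrix describes an acyclic graph. NOTEARS uses h(W) = tr(exp(W ∘ W)) − d, where ∘ is the elementwise product and d is the number of nodes. The sketch below evaluates that function in plain Python with a truncated power series for the matrix exponential; the helper names and the example matrices are illustrative, and a real pipeline would use a numerical library and pair h with a data-fit loss inside an optimizer.

```python
def matmul(a, b):
    """Multiply two square matrices given as lists of lists."""
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)] for i in range(n)]

def notears_h(w, terms=30):
    """h(W) = tr(exp(W * W elementwise)) - d, with exp approximated by a truncated series."""
    d = len(w)
    a = [[w[i][j] ** 2 for j in range(d)] for i in range(d)]  # elementwise square of W
    power = [[float(i == j) for j in range(d)] for i in range(d)]  # A^0 = identity
    trace_exp = float(d)  # trace of the k = 0 term
    factorial = 1.0
    for k in range(1, terms):
        power = matmul(power, a)  # A^k
        factorial *= k            # k!
        trace_exp += sum(power[i][i] for i in range(d)) / factorial
    return trace_exp - d

# w[i][j] is the weight of the edge i -> j (a convention assumed for this example).
dag = [[0.0, 1.5, 0.0],
       [0.0, 0.0, -0.7],
       [0.0, 0.0, 0.0]]
cyclic = [[0.0, 1.5, 0.0],
          [0.0, 0.0, -0.7],
          [0.4, 0.0, 0.0]]  # adds the edge 2 -> 0, closing a cycle

print(notears_h(dag))     # 0.0
print(notears_h(cyclic))  # strictly positive
```

Because h is differentiable in W and grows as cycles get heavier, an optimizer can treat "be a DAG" as an equality constraint h(W) = 0 instead of searching over graph structures combinatorially.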

In parallel, causal representation learning — pioneered by Bernhard Schölkopf and Yoshua Bengio — is teaching neural networks to extract latent causal variables directly from raw images, text, and sensor streams, bridging deep learning and structural causal models for the first time. Early 2026 results from Mila, Max Planck, and DeepMind suggest this will be the path to causal foundation models.

Why LLM Agents Need Causality

The hottest application area for causal reasoning in 2026 is, surprisingly, agentic AI. An LLM agent that books flights, files insurance claims, or runs a marketing campaign is constantly choosing actions whose consequences feed back into the world. Pure next-token-prediction does not produce reliable plans for that kind of intervention — agents trained only on associations confidently chain actions that look plausible token-by-token but compound errors causally.

Hybrid stacks are emerging. Interpretability work such as Redwood Research's causal scrubbing, Google DeepMind's causal-aware planners, and startups like causaLens, Geminos AI, and Inferenz are wrapping LLMs with explicit causal world models that constrain action selection. The pattern looks a lot like the System 1 / System 2 split in robotics VLA models: a fast neural pattern-matcher proposes, a slow causal model validates.
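The propose-then-validate pattern can be sketched in a few lines. Everything here is hypothetical: the `Proposal` type, the `choose_action` function, the action names, and the effect numbers are invented to show the control flow, and do not describe any vendor's product. The assumption is that some causal model can supply a predicted effect for each candidate action under intervention.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Sequence

@dataclass(frozen=True)
class Proposal:
    action: str
    plausibility: float  # score from the fast pattern-matcher, e.g. an LLM

def choose_action(
    proposals: Sequence[Proposal],
    predicted_effect: Callable[[str], float],
    min_effect: float = 0.0,
) -> Optional[str]:
    """Return the most plausible action whose predicted interventional effect clears the bar."""
    for proposal in sorted(proposals, key=lambda p: p.plausibility, reverse=True):
        if predicted_effect(proposal.action) > min_effect:
            return proposal.action
    return None  # nothing passed validation; a real system might escalate to a human

# Hypothetical effect estimates: expected change in retained revenue under do(action).
EFFECTS = {"send_discount": -1.2, "offer_onboarding_call": 3.4, "do_nothing": 0.0}

proposals = [
    Proposal("send_discount", 0.9),
    Proposal("offer_onboarding_call", 0.6),
    Proposal("do_nothing", 0.1),
]
chosen = choose_action(proposals, lambda action: EFFECTS[action])
print(chosen)  # offer_onboarding_call
```

The most plausible-looking proposal is rejected because its predicted effect is negative, and the second-ranked one is selected. The design choice is that the fast component only ranks candidates, while the causal component holds veto power over each one.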

A Practical Causal AI Adoption Guide

- Start with one decision, not one dataset. Pick a high-stakes business action — pricing, churn intervention, drug dosage — where being wrong is expensive. The causal question writes itself.

- Draw the DAG before you touch the data. A whiteboard with five nodes and a handful of arrows beats an ML notebook every time. This is where domain experts add value that no model can.

- Use DoWhy's 4-step workflow. Model → Identify → Estimate → Refute. The refutation tests (placebo treatments, random common causes, subset robustness) are what separate a real causal claim from a flattering p-value.

- Layer causality on top of ML, not instead of it. Use deep learning for the nuisance functions inside Double Machine Learning. The two paradigms are complementary.

- Demand counterfactual explanations. If a vendor's "explainable AI" cannot answer "what minimal change would have flipped this decision?" — it is not yet ready for high-stakes deployment.
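The estimate-then-refute step in the workflow above is easier to trust once you have seen it work on data where the truth is known. The sketch below is written from scratch in plain Python and does not use DoWhy's API: it simulates a confounded churn dataset with invented probabilities, estimates the effect by adjusting for the confounder, then runs a placebo check in the spirit of a placebo-treatment refutation. All function names and numbers are assumptions made for illustration.

```python
import random

rng = random.Random(7)

def make_data(n):
    """Toy churn data: engagement confounds the help-center nudge and retention."""
    rows = []
    for _ in range(n):
        engaged = rng.random() < 0.4
        nudged = rng.random() < (0.7 if engaged else 0.2)
        p_retain = 0.5 + 0.3 * engaged + 0.05 * nudged  # assumed true effect: +0.05
        rows.append((engaged, nudged, rng.random() < p_retain))
    return rows

def mean(values):
    return sum(values) / len(values)

def backdoor_estimate(rows):
    """Treated-minus-control difference within each confounder stratum, weighted by stratum size."""
    total = 0.0
    for stratum in (False, True):
        group = [r for r in rows if r[0] == stratum]
        treated = [y for _, t, y in group if t]
        control = [y for _, t, y in group if not t]
        total += (mean(treated) - mean(control)) * len(group) / len(rows)
    return total

def placebo_refute(rows):
    """Replace the real treatment with a shuffled copy; a sound estimate should drop to about 0."""
    fake = [t for _, t, _ in rows]
    rng.shuffle(fake)
    return backdoor_estimate([(z, t, y) for (z, _, y), t in zip(rows, fake)])

data = make_data(50_000)
naive = mean([y for _, t, y in data if t]) - mean([y for _, t, y in data if not t])
adjusted = backdoor_estimate(data)
placebo = placebo_refute(data)
print(f"naive {naive:+.3f}  adjusted {adjusted:+.3f}  placebo {placebo:+.3f}")
```

With these assumed probabilities the naive difference lands near +0.20, the adjusted estimate near the true +0.05, and the placebo estimate near zero. A placebo result far from zero would signal that the estimator is picking up something other than the treatment.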

The Decade of Decision-Grade AI

For ten years, the AI community has been racing to scale up association-level intelligence. The results have been spectacular and incomplete. Generative models compose, summarize, and code at near-human levels but cannot reliably tell a user what would happen if they took a different action in the real world. That is not a bug — it is a structural limit of the rung they live on.

Causal AI is the upgrade path. It does not replace deep learning; it gives deep learning the assumptions, the operator, and the language it needs to step from seeing to deciding. As the EU AI Act's explainability obligations land in 2026, as real-world evidence eats RCT-only drug approval, and as autonomous agents start taking real actions on real money, the organizations that have invested in causal capability will look obvious in retrospect — the way the cloud-native ones did a decade ago.

The next leap in machine intelligence is not bigger models. It is smarter questions. Causal AI is the discipline of asking them — and, finally, of answering them with rigor.