Tactile Robotics and Electronic Skin (E-Skin): How AI-Powered Touch Sensors Are Giving Robots a Sense of Feel in 2026

- Internet Pros Team

- May 7, 2026

- AI & Technology

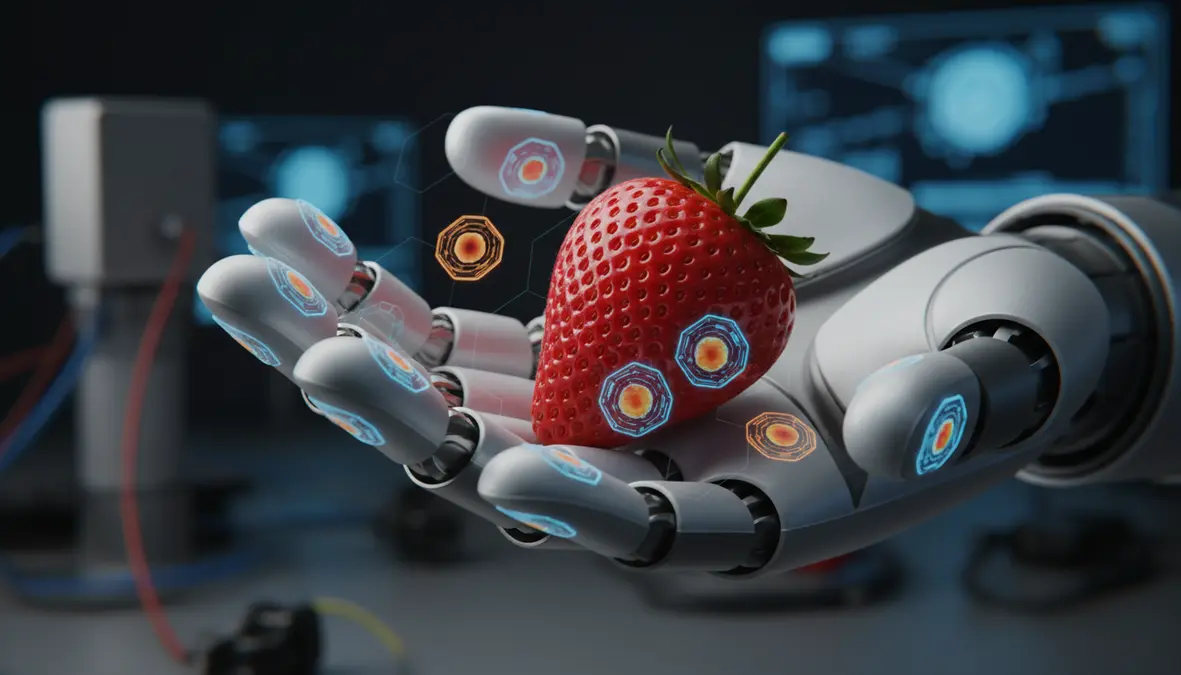

Robots in 2026 can see better than humans, talk like humans, and increasingly walk like humans — but for decades they have been almost entirely numb. The missing sense, the one that lets a child pick up an egg without crushing it and a surgeon tie a knot in a beating heart, is finally arriving. Tactile robotics and electronic skin (e-skin) have become the breakout robotics story of 2026, turning the cold metal hands of humanoids into surfaces that can feel pressure, slip, vibration, temperature, and texture — and pairing that signal stream with foundation-model AI that finally knows what to do with it.

Why Touch Was the Hardest Sense for Machines

Vision and audio were always going to fall first. They are channels that can be sampled at a distance, with a single sensor, and stored as clean two-dimensional grids that convolutional and transformer models could chew on. Touch broke every one of those assumptions. It is a contact sense: the data does not exist until two surfaces meet. It is high-dimensional: a single fingertip pressing into fabric produces shear, normal force, micro-vibration, and thermal flux, all at once and sampled at kilohertz rates. And it is spatial in the most awkward way possible: a useful tactile signal is distributed across the entire body of the robot, wraps around curved surfaces, and has to survive being slammed into walls, dropped, washed, and crushed.

For thirty years, robotics labs tried and largely failed to engineer artificial skin that combined high resolution, mechanical robustness, and a manageable wiring count. The breakthrough of the last 24 months has been a shift in mindset: rather than reinventing biology, treat tactile data the way the rest of AI now treats every other modality — as tokens that a transformer can learn from at scale.

"You cannot bolt dexterity onto a robot after the fact. Touch has to be a first-class input — sampled densely, fused with vision and language, and trained jointly. The robots that feel are the robots that work."

The Four Tactile Sensing Families Powering 2026

There is no single winning sensor — modern humanoid platforms layer multiple technologies because each one excels at a different physical phenomenon. Understanding the trade-offs is now table stakes for any robotics team:

Vision-Based Tactile

An internal camera watches a soft elastomer deform against the world. GelSight and Meta's DIGIT and panoramic DIGIT 360 deliver sub-millimeter spatial resolution and rich shear maps. Best for fingertip dexterity, manipulation, and texture identification.
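
One common way to turn camera frames of the elastomer into a shear estimate is to track printed markers and average their displacement. The snippet below is a minimal sketch of that idea, assuming marker positions have already been extracted as pixel coordinates; `mean_shear` and the sample coordinates are hypothetical and do not come from any vendor SDK.

```python
import math


def mean_shear(markers_before, markers_after):
    """Average marker displacement (dx, dy) in pixels, plus its magnitude."""
    if len(markers_before) != len(markers_after) or not markers_before:
        raise ValueError("need two equal-length, non-empty marker lists")
    count = len(markers_before)
    pairs = list(zip(markers_before, markers_after))
    dx = sum(after[0] - before[0] for before, after in pairs) / count
    dy = sum(after[1] - before[1] for before, after in pairs) / count
    return dx, dy, math.hypot(dx, dy)


# Illustrative marker positions: every marker moved 3 px right and 4 px down.
rest = [(0.0, 0.0), (10.0, 0.0), (0.0, 10.0)]
pressed = [(3.0, 4.0), (13.0, 4.0), (3.0, 14.0)]
print(mean_shear(rest, pressed))
```

A real pipeline would keep the per-marker displacement field rather than only its mean, since the spatial pattern is what distinguishes twist from slide.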

Capacitive Arrays

Thin, flexible, scalable to large coverage areas. Used by Pressure Profile Systems, Tekscan, and BeBop Sensors for full-arm and torso sleeves. The default choice for "where am I being touched" across humanoid bodies and collaborative robot skins.
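
The "where am I being touched" question reduces, in its simplest form, to a pressure-weighted centroid over the taxel grid. The following is a rough sketch under the assumption that one frame arrives as a list of rows of normalized readings; the function name, the threshold, and the sample frame are illustrative only.

```python
def contact_centroid(frame, threshold=0.1):
    """Return (row, col, total) for the pressure-weighted centroid of a frame.

    Taxels at or below `threshold` are treated as noise and ignored.
    Returns None when nothing is touching the array.
    """
    total = 0.0
    row_sum = 0.0
    col_sum = 0.0
    for r, row in enumerate(frame):
        for c, value in enumerate(row):
            if value > threshold:
                total += value
                row_sum += r * value
                col_sum += c * value
    if total == 0.0:
        return None
    return row_sum / total, col_sum / total, total


# Illustrative 4x4 frame with a contact patch in the middle.
frame = [
    [0.0, 0.0, 0.0, 0.0],
    [0.0, 0.5, 0.5, 0.0],
    [0.0, 0.5, 0.5, 0.0],
    [0.0, 0.0, 0.0, 0.0],
]
print(contact_centroid(frame))
```

A single centroid only works for one contact at a time; multi-touch handling would need connected-component labelling first.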

Piezoresistive & MEMS

Cheap, fast, and tolerant of overload. Arrays such as Sensel's embed tiny force-sensitive resistors into thin modular tiles. Excellent for high-frequency vibration and slip detection, which is the difference between holding a glass and dropping it.
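
Slip usually shows up as a burst of high-frequency vibration on top of an otherwise steady force reading. The sketch below illustrates one simple detector: take the first difference of the signal as a crude high-pass filter and flag the first window whose RMS crosses a threshold. The window length, threshold, and synthetic signal are assumptions chosen for illustration, not tuned values for any particular sensor.

```python
import math


def high_frequency_rms(samples):
    """RMS of the first difference, used here as a crude high-pass filter."""
    diffs = [b - a for a, b in zip(samples, samples[1:])]
    if not diffs:
        return 0.0
    return math.sqrt(sum(d * d for d in diffs) / len(diffs))


def detect_slip(samples, window=32, threshold=0.05):
    """Index of the first sample where windowed high-frequency RMS exceeds threshold.

    Returns None if the signal never crosses the threshold.
    """
    for end in range(window, len(samples) + 1):
        if high_frequency_rms(samples[end - window:end]) > threshold:
            return end - 1
    return None


# Synthetic data: a steady grip, then a small alternating vibration from sample 100.
steady = [1.0] * 100
slipping = steady + [1.0 + 0.2 * (-1) ** i for i in range(50)]
print(detect_slip(steady), detect_slip(slipping))
```

In practice the threshold would be calibrated per sensor and per object, and a proper band-pass filter would replace the first difference.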

Magnetic Tactile

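Magnets or magnetic particles embedded in a soft polymer shift over a Hall-effect or magnetometer array as the skin deforms. The ReSkin family pioneered by Carnegie Mellon and Meta AI, followed by AnySkin, is replaceable, washable, and survives thousands of contact cycles, addressing the durability problem that killed earlier e-skins. XELA Robotics uSkin applies the same Hall-effect principle in modular tiles.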

Who Is Shipping Touch in 2026

A year ago, tactile sensing was confined to research papers and a handful of specialty hands. In 2026, it is being baked into shipping humanoid platforms.

| Platform / Vendor | Tactile Stack | Where It Is Deployed |
|---|---|---|
| Meta AI (FAIR) | DIGIT 360, Sparsh tactile encoder, open Sparsh-X foundation model | Reference research stack — open-sourced and adopted across academia. |
| Tesla Optimus Gen 3 | Capacitive fingertip pads + force/torque at every joint, Dojo-trained tactile policies | Battery cell handling, fastener insertion, and parts kitting in Tesla factories. |
| Figure 02 | Custom multilayer fingertip sensors fused with Helix VLA model | BMW Spartanburg plant — sheet-metal placement and load handling. |
| Sanctuary AI Phoenix | Hydraulic-tactile micro-actuators with proprioceptive force sensing in every finger | Pick-and-place and contact-rich assembly across Magna and other partners. |
| 1X NEO | Soft body shell with full-coverage capacitive skin, fingertip GelSight-style optical pads | Home assistance — laundry, dishes, gentle human interaction. |
| Shadow Robot / Wonik Allegro | BioTac SP, XELA uSkin, NeuroTac neuromorphic pads | Dexterous research hands powering most published manipulation benchmarks. |
| Intuitive da Vinci 5 | Force-feedback instrument tips with sub-Newton resolution | First mass-deployed surgical robot to deliver true haptic feedback to the surgeon. |

Tactile Foundation Models: The AI Half of the Story

Hardware alone has never made a robot useful. The real unlock in 2026 is that tactile signals are finally being treated as a first-class modality inside large multimodal models. Meta's Sparsh family of self-supervised tactile encoders pre-trains on large collections of unlabeled tactile images (roughly 460,000 in the original release), much as DINOv2 did for vision. MIT's T3 and Berkeley's Touch-Vision-Language (TVL) models push in the same direction: T3 shares one transformer trunk across many sensors, and TVL contrasts tactile patches against images and text descriptions, producing embeddings that transfer across sensors.
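
Contrasting tactile patches against images is typically done with an InfoNCE-style objective: each tactile embedding should score highest against its own paired image and lower against every other image in the batch. The sketch below shows that loss in plain Python on toy two-dimensional embeddings. It is a generic illustration, not the published training code of any model named above, and the temperature and embeddings are made-up values.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def normalize(v):
    norm = math.sqrt(dot(v, v))
    return [x / norm for x in v]


def info_nce(tactile, images, temperature=0.1):
    """Mean cross-entropy of matching tactile[i] to images[i] within the batch."""
    t = [normalize(v) for v in tactile]
    im = [normalize(v) for v in images]
    loss = 0.0
    for i, t_i in enumerate(t):
        logits = [dot(t_i, im_j) / temperature for im_j in im]
        peak = max(logits)  # subtract the max for numerical stability
        log_denominator = peak + math.log(sum(math.exp(l - peak) for l in logits))
        loss += log_denominator - logits[i]
    return loss / len(t)


# Toy embeddings: aligned pairs should give a much lower loss than swapped pairs.
tactile = [[1.0, 0.0], [0.0, 1.0]]
aligned_images = [[1.0, 0.0], [0.0, 1.0]]
swapped_images = [[0.0, 1.0], [1.0, 0.0]]
print(info_nce(tactile, aligned_images), info_nce(tactile, swapped_images))
```

This version only scores tactile-to-image matching; symmetric variants average it with the image-to-tactile direction.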

These tactile encoders plug directly into Vision-Language-Action (VLA) models like Physical Intelligence π0.5, NVIDIA GR00T N1.5, and Google DeepMind Gemini Robotics. The result is a single robot brain that takes camera images, language instructions, and tactile feedback, and emits joint commands at 30-100 Hz. A robot that previously had to look at the egg before deciding to squeeze it can now feel the shell start to fracture and back off mid-grasp.
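
The egg example comes down to a control loop that reacts to touch between ticks rather than waiting for the next camera frame. As a rough illustration, the sketch below replaces the learned policy with a hand-written rule: ramp the grip command each tick, and halve it once the measured normal force suddenly drops, which is used here as a stand-in for the shell starting to give. All names, thresholds, and readings are hypothetical.

```python
def grip_commands(force_readings, ramp=0.1, drop_fraction=0.2, backoff=0.5):
    """Return one grip command per control tick.

    The command ramps up by `ramp` each tick. If the measured force falls by
    more than `drop_fraction` relative to the previous tick, the command is
    multiplied by `backoff` once and then held.
    """
    commands = []
    command = 0.0
    previous = None
    backed_off = False
    for force in force_readings:
        sudden_drop = (
            previous is not None
            and previous > 0
            and (previous - force) / previous > drop_fraction
        )
        if sudden_drop and not backed_off:
            command *= backoff
            backed_off = True
        elif not backed_off:
            command += ramp
        commands.append(command)
        previous = force
    return commands


# Illustrative readings: force builds, then drops sharply at tick 4.
readings = [0.0, 0.5, 1.0, 1.5, 0.9, 0.9]
print(grip_commands(readings))
```

A VLA policy would learn this reaction from data and condition it on vision and language, but the timing constraint is the same: the decision has to happen within a single control tick.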

Equally important is the rise of neuromorphic tactile processing. Spiking neural networks running on Intel Loihi 2 and BrainChip Akida process kilohertz tactile streams at milliwatt power budgets, and brain-inspired inference chips such as IBM NorthPole target similar efficiency gains for conventional networks. Both are critical enablers for battery-powered humanoids and prosthetic limbs that cannot afford to stream gigabytes of touch data over Wi-Fi.
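
The power savings come from processing events instead of frames: a taxel only produces data when its reading changes, and a spiking neuron only fires when enough input accumulates. The sketch below shows both halves in plain Python, with a send-on-delta encoder and a discrete-time leaky integrate-and-fire neuron. It is a conceptual illustration with made-up parameters and does not use any neuromorphic vendor toolchain.

```python
def delta_events(samples, threshold=0.05):
    """Send-on-delta encoding: emit (index, +1 or -1) when the signal has moved
    by at least `threshold` since the last emitted event."""
    events = []
    reference = samples[0]
    for index, value in enumerate(samples[1:], start=1):
        if value - reference >= threshold:
            events.append((index, 1))
            reference = value
        elif reference - value >= threshold:
            events.append((index, -1))
            reference = value
    return events


def lif_spike_times(input_current, leak=0.9, threshold=1.0):
    """Discrete-time leaky integrate-and-fire neuron.

    The membrane potential decays by `leak` each tick, adds the input, and
    resets to zero after crossing `threshold`. Returns the spiking ticks.
    """
    potential = 0.0
    spikes = []
    for tick, current in enumerate(input_current):
        potential = leak * potential + current
        if potential >= threshold:
            spikes.append(tick)
            potential = 0.0
    return spikes


# A constant signal produces no events; a press and release produces two.
print(delta_events([0.0] * 10))
print(delta_events([0.0, 0.0, 0.1, 0.1, 0.0]))
print(lif_spike_times([0.5] * 5))
```

An untouched patch of skin generates no events at all under this scheme, which is where most of the bandwidth and energy saving comes from.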

Where Tactile Robotics Is Already Earning Its Keep

- Manufacturing assembly. Contact-rich tasks — peg-in-hole insertion, snap fits, cable routing, fastener torquing — were the long tail that defeated traditional vision-only automation. Tactile-aware Optimus and Figure units are now closing those gaps in automotive and electronics plants.

- Surgical robotics. Intuitive's da Vinci 5, CMR Surgical Versius, and Medtronic Hugo are integrating force feedback that lets surgeons feel suture tension and tissue compliance again — capabilities that vanished when minimally invasive surgery moved through rigid trocars.

- Prosthetics. Esper Bionics, Atom Limbs, and Open Bionics are wiring e-skin signals back into amputees' nervous systems via targeted muscle reinnervation, restoring not just grip but graded sensation. Early users describe it as the first time their prosthesis felt like part of them.

- Logistics and retail. Amazon Robotics, Symbotic, and Covariant's tactile-equipped pickers handle deformable packaging — bagged produce, plastic clamshells, soft polybags — that defeated suction grippers for a decade.

- Home robots. 1X NEO and π0.5-driven platforms use whole-body capacitive skins for safe physical human-robot interaction, sensing the difference between a leash tug and a child leaning into the chassis.

- Teleoperation and the tactile internet. 5G-Advanced and emerging 6G specifications are finally meeting the sub-1 ms latency budget required for haptic round-trips, opening the door to remote surgery, hazardous-environment work, and skill teleoperation for training humanoid policies at scale.
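
The sub-1 ms haptic budget also sets a hard physical limit on distance, which a quick calculation makes concrete. The sketch below assumes signals travel through optical fiber at about two thirds of the speed of light (a typical figure, stated here as an assumption) and subtracts any processing time from the round-trip budget; the function name and the example processing time are illustrative.

```python
SPEED_OF_LIGHT_KM_PER_MS = 299.792458  # vacuum speed of light, km per millisecond
FIBER_VELOCITY_FACTOR = 2 / 3          # assumption: typical for silica fiber


def max_one_way_distance_km(round_trip_budget_ms, processing_ms=0.0):
    """Longest one-way fiber path that fits inside a haptic round-trip budget."""
    propagation_ms = round_trip_budget_ms - processing_ms
    if propagation_ms <= 0:
        return 0.0
    return SPEED_OF_LIGHT_KM_PER_MS * FIBER_VELOCITY_FACTOR * propagation_ms / 2


# With a 1 ms budget and no processing delay, the operator can be roughly 100 km away.
print(round(max_one_way_distance_km(1.0), 1))
# Spending half the budget on radio, encoding, and control halves that range.
print(round(max_one_way_distance_km(1.0, processing_ms=0.5), 1))
```

This is why long-distance teleoperation designs tend to rely on local prediction or edge-side control loops rather than a strict end-to-end 1 ms round trip.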

The Open Benchmarks Driving the Field

As with every other corner of modern AI, progress now rides on shared datasets and reproducible evaluation. The Open Tactile Dataset, Meta's Touch-Vision-Language-Action corpus, the RoboTac Challenge at IROS 2025, and NIST's emerging robot-touch metrology suite have done for tactile what ImageNet and Open X-Embodiment did for vision and manipulation. Cross-sensor generalization — training on DIGIT and zero-shotting onto a uSkin tile — is becoming a published benchmark rather than a moonshot demo.

Choosing a Tactile Stack: A Practical Guide

- Match the sensor to the contact event. Slip detection wants high-frequency piezoresistive or magnetic sensors. Texture and shape ID wants vision-based GelSight-style optics. Whole-body safety wants large-area capacitive sleeves.

- Plan for replacement, not repair. Tactile skins wear out. The platforms that win are the ones designed for snap-in replacement of fingertip and palm modules — exactly the design pattern AnySkin demonstrated.

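- Adopt the open foundation models. Sparsh, T3, and Touch-Vision-Language give you a large head start on data, because their pretraining sets are far bigger than what a single team can collect. Fine-tuning a pretrained encoder typically beats from-scratch tactile training in published comparisons.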

- Budget for compute at the edge. A full-body humanoid skin can produce gigabits per second. Local neuromorphic or DSP-class processing is increasingly mandatory.

- Test in contact-rich, not free-space, scenarios. A demo that grasps cubes from a table tells you nothing. Wiping, twisting, sliding, inserting, and assembling are the tasks that separate real tactile competence from theatre.
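
The edge-compute point in the list above is easy to check with back-of-the-envelope arithmetic: raw data rate is taxel count times sample rate times bits per sample. The numbers below (100,000 taxels, 1 kHz, 16 bits) are illustrative assumptions, not the specification of any shipping skin.

```python
def skin_data_rate_gbps(taxels, sample_rate_hz, bits_per_sample):
    """Raw, uncompressed data rate of a tactile skin in gigabits per second."""
    return taxels * sample_rate_hz * bits_per_sample / 1e9


# Assumed full-body skin: 100,000 taxels sampled at 1 kHz with 16-bit readings.
full_body = skin_data_rate_gbps(100_000, 1_000, 16)
# Assumed single fingertip module: 256 taxels at the same rate and depth.
fingertip = skin_data_rate_gbps(256, 1_000, 16)
print(full_body, fingertip)
```

Under these assumptions the body skin lands at 1.6 Gbit/s while one fingertip stays near 4 Mbit/s, which is why event-based encoding or local feature extraction matters far more for large-area skins than for fingertips.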

The Decade of Embodied Intelligence Just Got Its Missing Sense

For the entire history of robotics, the easiest way to make a robot fail has been to ask it to do something delicate. That ceiling is finally cracking. The combination of dense, durable e-skins, tactile foundation models, and Vision-Language-Action policies that fuse touch with sight and language is producing the first generation of machines that handle the physical world with anything close to human sensitivity.

There is a lot of road left. Tactile data still drowns in noise; cross-platform generalization is fragile; the safety implications of robots that feel us back have barely been explored by regulators. But the shape of the next robotics decade is now clear. Vision gave robots eyes. Language gave them instructions. Touch is what is finally giving them hands — and with hands, the entire physical economy becomes addressable.

The robots that ship in 2027 and beyond will be defined less by how many degrees of freedom they have and more by how well they feel. In tactile robotics, the field has found the last great missing modality — and the race to instrument every fingertip, palm, and chassis on Earth is officially on.